Paper Name: Computer Organization And Architecture

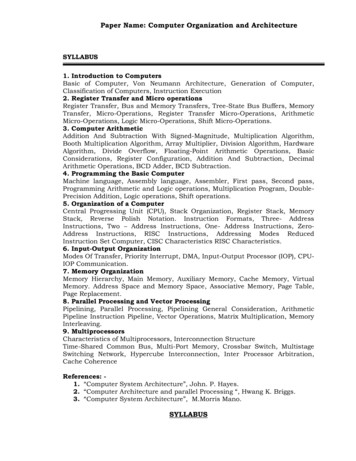

SYLLABUS

1. Introduction to Computers
Basics of Computer, Von Neumann Architecture, Generation of Computer, Classification of Computers, Instruction Execution

2. Register Transfer and Micro-operations
Register Transfer, Bus and Memory Transfers, Three-State Bus Buffers, Memory Transfer, Micro-Operations, Register Transfer Micro-Operations, Arithmetic Micro-Operations, Logic Micro-Operations, Shift Micro-Operations

3. Computer Arithmetic
Addition and Subtraction with Signed-Magnitude, Multiplication Algorithm, Booth Multiplication Algorithm, Array Multiplier, Division Algorithm, Hardware Algorithm, Divide Overflow, Floating-Point Arithmetic Operations, Basic Considerations, Register Configuration, Addition and Subtraction, Decimal Arithmetic Operations, BCD Adder, BCD Subtraction

4. Programming the Basic Computer
Machine Language, Assembly Language, Assembler, First Pass, Second Pass, Programming Arithmetic and Logic Operations, Multiplication Program, Double-Precision Addition, Logic Operations, Shift Operations

5. Organization of a Computer
Central Processing Unit (CPU), Stack Organization, Register Stack, Memory Stack, Reverse Polish Notation, Instruction Formats, Three-Address Instructions, Two-Address Instructions, One-Address Instructions, Zero-Address Instructions, RISC Instructions, Addressing Modes, Reduced Instruction Set Computer, CISC Characteristics, RISC Characteristics

6. Input-Output Organization
Modes of Transfer, Priority Interrupt, DMA, Input-Output Processor (IOP), CPU-IOP Communication

7. Memory Organization
Memory Hierarchy, Main Memory, Auxiliary Memory, Cache Memory, Virtual Memory, Address Space and Memory Space, Associative Memory, Page Table, Page Replacement

8. Parallel Processing and Vector Processing
Pipelining, Parallel Processing, Pipelining General Consideration, Arithmetic Pipeline, Instruction Pipeline, Vector Operations, Matrix Multiplication, Memory Interleaving

9. Multiprocessors
Characteristics of Multiprocessors, Interconnection Structure, Time-Shared Common Bus, Multi-Port Memory, Crossbar Switch, Multistage Switching Network, Hypercube Interconnection, Inter-Processor Arbitration, Cache Coherence

References:
1. "Computer System Architecture", John P. Hayes.
2. "Computer Architecture and Parallel Processing", K. Hwang and F. A. Briggs.
3. "Computer System Architecture", M. Morris Mano.


TABLE OF CONTENTS

Unit 1: Introduction to Computers
Computer: An Introduction
1.1 Introduction
1.2 What Is Computer
1.3 Von Neumann Architecture
1.4 Generation Of Computer
  1.4.1 Mechanical Computers (1623-1945)
  1.4.2 Pascaline
  1.4.3 Difference Engine
  1.4.4 Analytical Engine
  1.4.5 Harvard Mark I And The Bug
  1.4.6 First Generation Computers (1937-1953)
  1.4.7 Second Generation Computers (1954-1962)
  1.4.8 Third Generation Computers (1963-1972)
  1.4.9 Fourth Generation Computers (1972-1984)
  1.4.10 Fifth Generation Computers (1984-1990)
  1.4.11 Later Generations (1990 -)
1.5 Classification Of Computers
  1.5.1 Micro Computer
  1.5.2 Mini Computer
  1.5.3 Mainframe Computer
  1.5.4 Super Computer

Unit 2: Register Transfer and Micro-operations
2.1 Register transfer
2.2 Bus and Memory Transfers
  2.2.1 Three-state bus buffers
  2.2.2 Memory transfer
2.3 Micro-Operations
  2.3.1 Register transfer Micro-Operations
  2.3.2 Arithmetic Micro-Operations
  2.3.3 Logic Micro-Operations
  2.3.4 Shift Micro-Operations

Unit 3: Programming elements
3.1 Computer Arithmetic
3.2 Addition and subtraction with signed-magnitude
3.3 Multiplication algorithm
  3.1.1 Booth multiplication algorithm
  3.1.2 Array multiplier
  3.2.3 Division algorithm
    3.2.3.1 Hardware algorithm
    3.2.3.2 Divide Overflow
3.4 Floating-point Arithmetic operations
  3.4.1 Basic considerations
  3.4.2 Register configuration
  3.4.3 Addition and subtraction
3.5 Decimal Arithmetic operations
  3.5.1 BCD adder
  3.5.2 BCD subtraction

Unit 4: Programming the Basic Computer
4.1 Machine language
4.2 Assembly language
4.3 Assembler
  4.3.1 First pass
  4.3.2 Second pass
4.4 Programming Arithmetic and Logic operations
  4.4.1 Multiplication Program
  4.4.2 Double-Precision Addition
  4.4.3 Logic operations
  4.4.4 Shift operations

Unit 5: Central Processing Unit (CPU)
5.1 Stack organization
  5.1.1 Register stack
  5.1.2 Memory stack
  5.1.3 Reverse Polish notation
5.2 Instruction Formats
  5.2.1 Three-address instructions
  5.2.2 Two-address instructions
  5.2.3 One-address instructions
  5.2.4 Zero-address instructions
  5.2.5 RISC Instructions
5.3 Addressing Modes
5.4 Reduced Instruction Set Computer
  5.4.1 CISC characteristics
  5.4.2 RISC characteristics

Unit 6: Input-Output Organization
6.1 Modes of transfer
  6.1.1 Programmed I/O
  6.1.2 Interrupt-Initiated I/O
6.2 Priority interrupt
  6.2.1 Daisy-chaining priority
  6.2.2 Parallel priority interrupt
  6.2.3 Interrupt cycle
6.3 DMA
  6.3.1 DMA Controller
  6.3.2 DMA Transfer
6.4 Input-Output Processor (IOP)
  6.1.1 CPU-IOP Communication
  6.1.2 Serial Communication
  6.1.3 Character-Oriented Protocol
  6.1.4 Bit-Oriented Protocol
6.5 Modes of transfer

Unit 7: Memory Organization
7.1 Memory hierarchy
7.2 Main memory
  7.2.1 RAM and ROM chips
  7.2.2 Memory Address Map
7.3 Auxiliary memory
  7.3.1 Magnetic disks
  7.3.2 Magnetic Tape
7.4 Cache memory
  7.4.1 Direct Mapping
  7.4.2 Associative Mapping
  7.4.3 Set-associative Mapping
  7.4.4 Virtual memory
  7.4.5 Associative memory Page table
  7.4.6 Page Replacement

Unit 8: Introduction to Parallel Processing
8.1 Pipelining
  8.1.1 Parallel processing
  8.1.2 Pipelining general consideration
  8.1.3 Arithmetic pipeline
  8.1.4 Instruction pipeline

Unit 9: Vector Processing
9.1 Vector operations
9.2 Matrix multiplication
9.3 Memory interleaving

Unit 10: Multiprocessors
10.1 Characteristics of multiprocessors
10.2 Interconnection structure
  10.2.1 Time-shared common bus
  10.2.2 Multi-port memory
  10.2.3 Crossbar switch
  10.2.4 Multistage switching network
  10.2.5 Hypercube interconnection
10.3 Inter-processor arbitration
10.4 Cache coherence
10.5 Instruction Execution

UNIT 1
INTRODUCTION TO COMPUTERS

Computer: An Introduction
1.1 Introduction
1.2 What Is Computer
1.3 Von Neumann Architecture
1.4 Generation Of Computer
  1.4.1 Mechanical Computers (1623-1945)
  1.4.2 Pascaline
  1.4.3 Difference Engine
  1.4.4 Analytical Engine
  1.4.5 Harvard Mark I And The Bug
  1.4.6 First Generation Computers (1937-1953)
  1.4.7 Second Generation Computers (1954-1962)
  1.4.8 Third Generation Computers (1963-1972)
  1.4.9 Fourth Generation Computers (1972-1984)
  1.4.10 Fifth Generation Computers (1984-1990)
  1.4.11 Later Generations (1990 -)
1.5 Classification Of Computers
  1.5.1 Micro Computer
  1.5.2 Mini Computer
  1.5.3 Mainframe Computer
  1.5.4 Super Computer

1.1 INTRODUCTION

The computer is one of the major components of an Information Technology network and is gaining increasing popularity. Today, computer technology has permeated every sphere of modern life. In this block, we introduce computer hardware technology: what it is and how it works. We also discuss some of the terminology closely linked with Information Technology and computers.

1.2 WHAT IS COMPUTER?

The Oxford dictionary defines a computer as "An automatic electronic apparatus for making calculations or controlling operations that are expressible in numerical or logical terms". A computer is a device that accepts data¹, processes the data according to the instructions provided by the user, and finally returns the results to the user. It usually consists of input, output, storage, arithmetic, logic, and control units. The computer can store and manipulate large quantities of data at very high speed.

The basic function performed by a computer is the execution of a program. A program is a sequence of instructions that operates on data to perform certain tasks. In modern digital computers, data is represented in binary form using two symbols, 0 and 1, which are called binary digits or bits. But the data we deal with consists of numeric data and characters such as decimal digits 0 to 9, letters A to Z, arithmetic operators (e.g. +, -, etc.), relational operators (e.g. =, >, etc.), and many other special characters (e.g. ;, @, {, ], etc.). These characters must themselves be encoded as groups of bits. A collection of eight bits is called a byte, and one byte is used to represent one character internally. Most computers use two bytes or four bytes to represent numbers (positive and negative) internally.

Another commonly used term is the word. A word may be defined as a unit of information that a computer can process or transfer at a time. A word is generally equal to the number of bits transferred between the central processing unit and the main memory in a single step. It may also be defined as the basic unit of storage of integer data in a computer. Normally, a word is equal to 8, 16, 32 or 64 bits. Terms like 32-bit computer and 64-bit computer indicate the word size of the computer.

1.3 VON NEUMANN ARCHITECTURE

Most of today's computer designs are based on concepts developed by John von Neumann, referred to as the von Neumann architecture. Von Neumann proposed that there should be a unit performing arithmetic and logical operations on the data. This unit is termed the Arithmetic Logic Unit (ALU).
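The byte and word terminology of Section 1.2 can be made concrete with a small sketch. It assumes ASCII character codes and two's-complement, big-endian integers; the text specifies neither, so both are illustrative choices, and the function names are hypothetical.

```python
def char_to_bits(ch: str) -> str:
    """Return the 8-bit pattern that stores one character (ASCII assumed)."""
    code = ord(ch)
    if code > 0xFF:
        raise ValueError("character does not fit in one byte")
    return format(code, "08b")


def int_to_bytes(value: int, size: int) -> bytes:
    """Store a signed number in `size` bytes (two's complement assumed)."""
    return value.to_bytes(size, byteorder="big", signed=True)


def word_range(bits: int) -> tuple[int, int]:
    """Smallest and largest signed integer that fit in a word of `bits` bits."""
    return -(1 << (bits - 1)), (1 << (bits - 1)) - 1


if __name__ == "__main__":
    print(char_to_bits("A"))            # one character, one byte
    print(int_to_bytes(-2, 2).hex())    # a negative number in two bytes
    for bits in (8, 16, 32, 64):        # the word sizes named in the text
        print(bits, word_range(bits))
```

The loop shows why word size matters: each doubling of the word length squares the number of distinct values a single word can hold.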
One way to provide instructions to such a computer is to connect various logic components in such a fashion that they produce the desired output for a given set of inputs. The process of connecting various logic components in a specific configuration to achieve desired results is called programming. Since this programming is achieved by providing instructions within the hardware through various connections, it is termed hardwired. But this is a very inflexible way of programming. Let us instead have a general configuration for arithmetic and logical functions. In such a case there is a need for a control signal, which directs the ALU to perform a specific arithmetic or logic function on the data. Therefore, in such a system, the desired function can be performed on the data simply by changing the control signal.

¹ Representation of facts, concepts, or instructions in a formalized manner suitable for communication, interpretation, or processing by humans or by automatic means. Any representations, such as characters or analog quantities, to which meaning is or might be assigned.

Any operation that needs to be performed on the data can then be obtained by providing a set of control signals. Thus, for a new operation one only needs to change the set of control signals.

But how can these control signals be supplied? Let us try to answer this from the definition of a program. A program consists of a sequence of steps. Each of these steps requires a certain arithmetic, logical, or input/output operation to be performed on data. Therefore, each step may require a new set of control signals. Is it possible for us to provide a unique code for each set of control signals? The answer is yes. But what do we do with these codes? What about adding a hardware segment that accepts a code and generates the control signals? This hardware segment is termed the Control Unit (CU). Thus, a program now consists of a sequence of codes. This machine is quite flexible, as we only need to provide a new sequence of codes for a new program. Each code is, in effect, an instruction for the computer. The hardware interprets each of these instructions and generates the respective control signals.

The Arithmetic Logic Unit (ALU) and the Control Unit (CU) together are termed the Central Processing Unit (CPU). The CPU is the most important component of a computer's hardware. The ALU performs arithmetic operations such as addition, subtraction, multiplication and division, and logical operations such as "Is A > B?" (where A and B are both numeric or alphanumeric data) or "Is a given character equal to M (for male) or F (for female)?" The control unit interprets instructions and produces the respective control signals.

All the arithmetic and logical operations are performed in the CPU in special storage areas called registers. The size of the register is one of the important considerations in determining the processing capabilities of the CPU. Register size refers to the amount of information that can be held in a register at a time for processing.
The larger the register size, the faster the processing may be. A CPU's processing power is measured in Million Instructions Per Second (MIPS). The performance of the CPU was measured in milliseconds (one thousandth of a second) on first-generation computers, in microseconds (one millionth of a second) on second-generation computers, and is expected to be measured in picoseconds (one thousandth of a nanosecond) in later generations.

How can the instructions and data be put into the computer? An external environment supplies the instructions and data; therefore, an input module is needed. The main responsibility of the input module is to put the data in the form of signals that can be recognized by the system. Similarly, we need another component, which will report the results in the proper format and form. This component is called the output module. These components are together referred to as input/output (I/O) components. In addition, to transfer information, the computer system internally needs system interconnections. The most common input/output devices are the keyboard, monitor and printer, and the most common interconnection structure is the bus structure.
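The idea developed above, a general-purpose ALU steered by control signals and a control unit that turns instruction codes into those signals, can be sketched in a few lines. This is only an illustration: the numeric instruction codes, the signal names, and the single accumulator register are assumptions made for the example, not an encoding given in the text.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical instruction codes mapped to control signals (assumed encoding).
CONTROL_SIGNALS: Dict[int, str] = {0x1: "ADD", 0x2: "SUB", 0x3: "AND", 0x4: "OR"}

# One general ALU configuration; the control signal selects the function.
ALU_FUNCTIONS: Dict[str, Callable[[int, int], int]] = {
    "ADD": lambda a, b: a + b,
    "SUB": lambda a, b: a - b,
    "AND": lambda a, b: a & b,
    "OR": lambda a, b: a | b,
}


def control_unit(code: int) -> str:
    """Interpret an instruction code and produce the control signal."""
    if code not in CONTROL_SIGNALS:
        raise ValueError(f"unknown instruction code: {code:#x}")
    return CONTROL_SIGNALS[code]


def alu(signal: str, a: int, b: int) -> int:
    """Perform the arithmetic or logic function chosen by the control signal."""
    return ALU_FUNCTIONS[signal](a, b)


def run(program: List[Tuple[int, int]], accumulator: int = 0) -> int:
    """Execute a program given as a sequence of (code, operand) pairs.

    The accumulator plays the role of a CPU register holding the running result.
    """
    for code, operand in program:
        accumulator = alu(control_unit(code), accumulator, operand)
    return accumulator


if __name__ == "__main__":
    # (0 + 12) - 5 = 7, then 7 AND 0b0110 = 6
    print(run([(0x1, 12), (0x2, 5), (0x3, 0b0110)]))
```

A new program needs no rewiring, only a different list of codes, which is the flexibility the hardwired approach lacks.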

Are these two components sufficient for a working computer? No, because input devices can bring in instructions or data only sequentially, whereas a program may not be executed sequentially, as jump instructions are normally encountered in programming. In addition, more than one data element may be required at a time. Therefore, a temporary storage area is needed in a computer to hold the instructions and the data. This component is referred to as memory. Von Neumann pointed out that the same memory can be used for storing both data and instructions. In such cases the data can be treated as data on which processing can be performed, while instructions can be treated as data that can be used for the generation of control signals.

The memory unit stores all the information in a group of memory cells, also called memory locations, as binary digits. Each memory location has a unique address and can be addressed independently. The contents of the desired memory locations are provided to the central processing unit by referring to the address of the memory location. The amount of information that can be held in the main memory is known as memory capacity. The capacity of the main memory is measured in kilobytes (KB) or megabytes (MB). One kilobyte stands for 2^10 bytes, which is 1024 bytes (or approximately 1000 bytes). A megabyte stands for 2^20 bytes, which is a little over one million bytes. When 64-bit CPUs become common, memory will start to be spoken about in terabytes, petabytes, and exabytes.

- One kilobyte equals 2 to the 10th power, or 1,024 bytes.
- One megabyte equals 2 to the 20th power, or 1,048,576 bytes.
- One gigabyte equals 2 to the 30th power, or 1,073,741,824 bytes.
- One terabyte equals 2 to the 40th power, or 1,099,511,627,776 bytes.
- One petabyte equals 2 to the 50th power, or 1,125,899,906,842,624 bytes.
- One exabyte equals 2 to the 60th power, or 1,152,921,504,606,846,976 bytes.
- One zettabyte equals 2 to the 70th power bytes.

Note: There is some lack of standardization on these terms when applied to memory and disk capacity. Memory specifications tend to adhere to the definitions above, whereas disk capacity specifications tend to simplify things to powers of 10 (kilo = 10^3, mega = 10^6, giga = 10^9, etc.) in order to produce round numbers.

Let us summarize the key features of a von Neumann machine.

- The hardware of the von Neumann machine consists of:
  - a CPU, which includes an ALU and a CU
  - a main memory system
  - an input/output system
- The von Neumann machine uses the stored program concept, i.e., the program and data are stored in the same memory unit. Computers prior to this idea stored programs and data in separate memories. Entering and modifying these programs was very difficult, as they were entered manually by setting switches and plugging and unplugging.
- Each location of the memory of a von Neumann machine can be addressed independently.
- Execution of instructions in a von Neumann machine is carried out in a sequential fashion (unless explicitly altered by the program itself) from one instruction to the next.

The following figure shows the basic structure of a von Neumann machine. A von Neumann machine has only a single path between the main memory and the control unit (CU). This feature/constraint is referred to as the von Neumann bottleneck. Several other architectures have been suggested for modern computers.

von Neumann Machine

- Attributed to John von Neumann
- Treats program and data equally
- One port to memory
- Simplified hardware
- "von Neumann bottleneck" (the rate at which data and program can get into the CPU is limited by the bandwidth of the interconnect)

1.4 HISTORY OF COMPUTERS

This section gives basic information about technological development trends in computers in the past and their projections for the future. If we want to know about computers completely, we must start from the history of computers and look into the details of the various technological and intellectual breakthroughs. These are essential to give us a feel for how much work and effort has gone into getting the computer into its present shape.

The ancestors of modern
