The Impact on Healthcare, Policy and Practice from 36 Multi-project Research Programmes

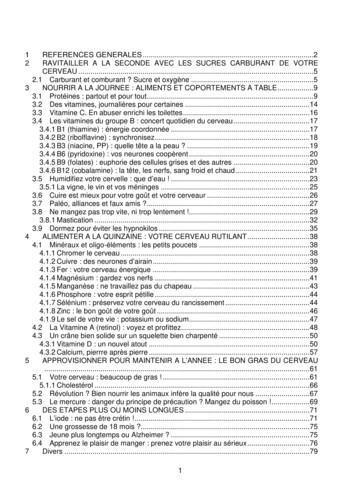

Hanney et al. Health Research Policy and Systems (2017) 15:26
DOI 10.1186/s12961-017-0191-y

RESEARCH Open Access

The impact on healthcare, policy and practice from 36 multi-project research programmes: findings from two reviews

Steve Hanney1*, Trisha Greenhalgh2, Amanda Blatch-Jones3, Matthew Glover1 and James Raftery4

Abstract

Background: We sought to analyse the impacts found, and the methods used, in a series of assessments of programmes and portfolios of health research consisting of multiple projects.

Methods: We analysed a sample of 36 impact studies of multi-project research programmes, selected from a wider sample of impact studies included in two narrative systematic reviews published in 2007 and 2016. We included impact studies in which the individual projects in a programme had been assessed for wider impact, especially on policy or practice, and where findings had been described in a way that allowed them to be collated and compared.

Results: Included programmes were highly diverse in terms of location (11 different countries plus two multi-country ones), number of component projects (8 to 178), nature of the programme, research field, mode of funding, time between completion and impact assessment, methods used to assess impact, and level of impact identified. Thirty-one studies reported on policy impact, 17 on clinician behaviour or informing clinical practice, three on a combined category such as policy and clinician impact, and 12 on wider elements of impact (health gain, patient benefit, improved care or other benefits to the healthcare system). In those multi-project programmes that assessed the respective categories, the median percentage of projects that reported some impact was: policy 35% (range 5–100%), practice 32% (10–69%), combined category 64% (60–67%), and health gain/health services 27% (6–48%). Variations in the levels of impact achieved partly reflected differences in the types of programme, levels of collaboration with users, and methods and timing of impact assessment. Most commonly, principal investigators were surveyed; some studies involved desk research and some involved interviews with investigators and/or stakeholders. Most studies used a conceptual framework such as the Payback Framework. One study attempted to assess the monetary value of a research programme's health gain.

Conclusion: The widespread impact reported for some multi-project programmes, including needs-led and collaborative ones, could potentially be used to promote further research funding. Moves towards greater standardisation of assessment methods could address existing inconsistencies and better inform strategic decisions about research investment; however, unresolved issues about such moves remain.

Keywords: Research impact, Multi-project programmes, Policy impact, Practice impact, Health gains, Monetisation, Payback Framework, Health technology assessment, World Health Report, Global Observatory

\* Correspondence: stephen.hanney@brunel.ac.uk
1 Health Economics Research Group (HERG), Institute of Environment, Health and Societies, Brunel University London, London UB8 3PH, United Kingdom
Full list of author information is available at the end of the article.

© The Author(s). 2017. Open Access: This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication o/1.0/) applies to the data made available in this article, unless otherwise stated.

Background

The World Health Report 2013 argued that "adding to the impetus to do more research is a growing body of evidence on the returns on investment" [1]. While much of the evidence on the benefits of research came originally from high-income countries, interest in producing such evidence is spreading globally, with examples from Bangladesh [2], Brazil [3], Ghana [4] and Iran [5] published in 2015–2016. Studies typically identify the impacts of health research in one or more categories such as health policy, clinical practice, health outcomes and the healthcare system. Individual research impact assessment studies can provide powerful evidence, but their nature and findings vary greatly [6–9], and ways to combine findings systematically across studies are being sought.

Previous reviews of studies assessing the impact of health research have analysed the methods and frameworks that are being developed and applied [6, 8–13]. An additional question, which has so far received less attention, is what level of impact might be expected from different types of programmes and portfolios of health research.

This paper describes the methods used in two successive comprehensive reviews of research impact studies, by Hanney et al. [6] and Raftery et al. [9], and explains why a sample of those studies was selected for the current analysis. We also consider the methodological challenges of seeking to draw comparisons across programmes that go beyond summing the impacts of individual projects within programmes. Importantly, such cross-programme comparisons are legitimate only if the programmes are comparable in certain ways.

For this paper, we deliberately sought studies that had assessed the impact of all projects in multi-project programmes, whether coordinated or not.
We focused on such multi-project programmes because this approach offered the best opportunities for meaningful comparisons across programmes, both of the methods and frameworks most frequently used for impact assessment and, crucially, of the levels of impact achieved and some of the factors associated with such impact. Furthermore, this approach focused attention on the desirability of finding ways to introduce greater standardisation in research impact assessment. However, we also discuss the severe limitations on how far this analysis can be taken. Finally, we consider the implications of our findings for investment in health research and development and for the methodology of research on research impact.

Methods

The methods used to conduct the two previous reviews on which this study is based [6, 9] are described in Box 1.

Box 1: Search strategy of the two original reviews

The two narrative systematic reviews of impact assessment studies on which this paper is based were conducted in broadly similar ways that included systematic searching of various databases and a range of additional techniques. Both were funded by the United Kingdom National Institute for Health Research (NIHR) Health Technology Assessment (HTA) Programme.

The searches for the first review, published in 2007, covered 1990 to July 2005 [6]. The second was a more recent meta-synthesis of studies of research impact covering primary studies published between 2005 and 2014 [9]. The search strategy used in the first review was adapted to take account of new indexing terms and of a modified version developed by Banzi et al. [11] (see Additional file 1: Literature search strategies for the two reviews, for a full description of the search strategies). Although the updated search strategy increased the sensitivity of the search, filters were used to improve the precision and study quality of the results.

The electronic databases searched in both studies included Ovid MEDLINE, MEDLINE(R) In-Process, EMBASE, CINAHL, the Cochrane Library including the Cochrane Methodology Register, the Health Technology Assessment Database, the NHS Economic Evaluation Database and the Health Management Information Consortium, which includes grey literature such as unpublished papers and reports. The first review included additional databases not included in the updated review: ECONLIT, Web of Knowledge (incorporating Science Citation Index and Social Science Citation Index), National Library of Medicine Gateway Databases and Conference Proceedings Index.

In addition to the standard searching of electronic databases, other methods to identify relevant literature were used in both studies. In the second review, these included independent hand-searching of four journals (Implementation Science, International Journal of Technology Assessment in Health Care, Research Evaluation, Health Research Policy and Systems), a list of known studies identified by team members, reviewing publication lists identified in major reviews published since 2005, and citation tracking of selected key publications using Google Scholar.

The 2007 review highlighted nine separate frameworks and approaches to assessing health research impact and identified 41 studies describing the application of these, or other, approaches.
The second review identified over 20 different impact models and frameworks (five of them continuing or building on ones from the first review) and 110 additional studies describing their empirical applications (as single or multiple case studies), although only a handful of frameworks had proven robust and flexible across a range of examples.

For the current study, the main inclusion criterion was that a study had attempted to identify projects within multi-project programmes for which investigators had claimed some wider impact, especially on policy or practice, and/or for which there was an external assessment showing such impact. We included only one paper per impact assessment and therefore, for example, excluded papers that reported in detail on a subset of the projects included in a main paper. We did not include studies that reported only the total number of instances of policy impact claimed for a whole programme, rather than the number of projects claiming to make such impact. We included only those studies whose findings were described in a way that allowed them to be collated with others, then analysed and presented in a broadly standardised way. This meant, for example, that the impacts described by a study had to fit into at least one of a number of broad categories.

We defined the categories as broadly as possible, to be inclusive while avoiding overlapping categories. Following an initial scan of the available studies, we identified four impact categories that were broadly compatible with, but not necessarily identical to, the impact categories in the widely used Payback Framework [14, 15] and the Canadian Academy of Health Sciences adaptation of that framework [10]. The categories were impact on health policy or on a healthcare organisation; informing practice or clinician behaviour; a combined category covering policy and clinician impact; and impact on health gain, patient benefit, improved care or other benefits to the healthcare system.

Studies were included if they had presented findings in one or more of these categories in a way that could allow standardised comparison across programmes. In some cases, the studies presented findings solely in terms of the numbers of projects that had claimed, or been shown, to have had impact in a particular category; these counts had to be standardised and presented as percentages. Each study was given the same weight in the analysis, irrespective of the number of individual projects it covered. For each of the four impact categories, we then calculated the median, across the studies reporting on that category, of the percentage of projects that had claimed to make an impact in that category. We also presented the full range of percentages in each category.

We extracted data on the methods and conceptual frameworks for assessment of research impact described in each study, and on the categories of factors that the authors considered relevant to the level of impact achieved. In identifying the latter, our approach was informed by a range of international research literature, in particular the 1983 analysis by Kogan and Henkel of the importance of researchers and potential users working together in a collaborative approach, the role of research brokers, and the presence of bodies that are ready to receive and use the research findings [16, 17].
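The standardisation and summary steps described above (counts converted to percentages, each study weighted equally, then the median and range taken per category) can be sketched in Python. This is a minimal illustration, not the authors' analysis code. The input is an illustrative subset of four policy-impact figures from Table 1 (Expert Panel [39], 4 of 70 projects; Ferguson et al. [40], 5 of 32; Hailey et al. [44], 14 of 20; Hera [46], 7 of 10), so its median will not reproduce the 35% reported across all studies. The helper names are hypothetical.

```python
from statistics import median

# Illustrative subset of Table 1 (policy impact only); not the full data set.
# Each entry: (projects claiming or shown to have policy impact, projects assessed)
policy_counts = {
    "Expert Panel, 2013 [39]": (4, 70),
    "Ferguson et al., 1998 [40]": (5, 32),
    "Hailey et al., 2000 [44]": (14, 20),
    "Hera, 2014 [46]": (7, 10),
}


def to_percentages(counts):
    """Standardise each study's count of impactful projects as a percentage."""
    return {study: 100 * hits / total for study, (hits, total) in counts.items()}


def summarise(percentages):
    """Median and range across studies, giving every study equal weight."""
    values = list(percentages.values())
    return median(values), min(values), max(values)


def pooled_percentage(counts):
    """For contrast only: pooling projects weights each study by its size."""
    hits = sum(h for h, _ in counts.values())
    total = sum(t for _, t in counts.values())
    return 100 * hits / total


pct = to_percentages(policy_counts)
med, lo, hi = summarise(pct)
print(f"median {med:.1f}% (range {lo:.1f}-{hi:.1f}%)")
print(f"pooled {pooled_percentage(policy_counts):.1f}%")
```

For this subset the per-study median is about 42.8% (range 5.7–70.0%), whereas pooling all 132 projects gives about 22.7%, a figure dominated by the two larger programmes. Treating each study as one observation keeps a single large programme from swamping the summary.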
Other papers on researcher-user collaboration, research brokerage and related themes that influenced our approach to the analysis included literature from North and Central America [18–21], Africa [22], the European Union [23] and the United Kingdom [6, 14, 24], as well as international studies and reviews [25–31].

Results

Thirty-six studies met the inclusion criteria for this analysis [6, 32–66]. These were highly diverse in terms of the location of the research, the nature and size of the funder's research programme or portfolio, the fields of research and modes of funding, the time between completion of the programme and impact assessment, the methods (and sometimes conceptual frameworks) used to assess the impact, and the levels of impact achieved. A brief summary of each study is provided in Table 1.

The studies came from 11 different countries, plus a European Union study and one covering various locations in Africa. The number of projects supplying data to the studies ranged from just eight, in a study of an occupational therapy research programme in the United Kingdom [59], to 178 projects from the Hong Kong Health and Health Services Research Fund [51]; intermediate examples include 22 operational research projects in Guatemala [35] and 153 projects in a range of programmes within the portfolio of the Australian National Breast Cancer Foundation [38].

In terms of the methods used to gather data about the projects in a programme, 21 of the 36 studies surveyed the researchers, usually just each project's Principal or Chief Investigator (PI), either as the sole source of data or combined with other methods such as documentary review, interviews and case studies. Six studies relied exclusively, or primarily, on documentary review and desk analysis. In at least three studies, interviewing all PIs was the main method or the key starting point used to identify further interviewees.
The picture is complicated because some studies used one approach, usually surveys, to gain information about all projects, and then supplemented it with other approaches, often including interviews with PIs, for selected projects on which case studies were also conducted. In total, over a third of the studies involved interviews with stakeholders, again sometimes in combination with documentary review. Many studies drew on a range of methods; two examples illustrate a particularly wide range. In the case of Brambila et al. [35] in Guatemala, the methods included site visits, which were used to support key informant interviews. Hera's [46] assessment of the impact of the Africa Health Systems Initiative Support to African Research Partnerships involved a range of methods, including documentary review and programme-level interviews. Project-level information was obtained from workshops for six projects and from a total of 12 interviews for the remaining four projects. In addition, they used participant observation of an end-of-programme workshop, at which they also presented some preliminary findings. In this instance, although the early timing of the assessment meant that it could not capture all the impact, the programme's interactive approach led to some policy impact while the projects were still underway.

In 20 of the 36 studies, the various methods used were organised according to a named conceptual framework (see Hanney et al. [6] and Raftery et al. [9] for a summary of all these frameworks); 16 of the 36 studies drew partly or wholly on the Payback Framework [15]. Several other existing named frameworks each informed one of the 36 studies; these included the Research Impact Framework [24], applied by Caddell et al. [37]; the Canadian Academy of Health Sciences framework [10], applied by Adam et al. [32]; the Banzi Research Impact model [11], applied by Milat et al. [53]; and the Becker Medical Library model [67], applied by Sainty [59].

In addition, various studies were identified as drawing, at least to some degree, on particular approaches,

Table 1 Thirty-six impact assessment studies: methods, frameworks, findings, factors linked to impact achieved

| Author, date, location | Programme/speciality | Methods for assessing health research impact | Concepts and techniques | Impacts found | Factors associated with level of impact; comments on methods and use of the findings |
|---|---|---|---|---|---|
| Adam et al., 2012 [32]; Catalonia, Spain | Catalan Agency for Health Information, Assessment and Quality – Clinical and health services research | Bibliometric analysis; surveys to researchers (99, 70 responded, 71%); interviews – researchers (15), decision-makers (8); in-depth case study of translation pathways | Canadian Academy of Health Sciences framework | Overall, 40 principal investigators (PIs) (of the 70) gave 50 examples of changes; examples included 12 organisational changes of the centre/institution, two public health management and two legal/regulatory (some PIs might have given more than one of these; therefore, total for organisational/management/policy changes: possibly 17–23%, and 20% figure used in this analysis); 29 of the 70 (41%) changed clinical practice | Interactions and participation of healthcare and policy decision-makers in the projects were crucial to achieving impact; the study showed that the Agency achieved the aim of filling a gap in local knowledge needs; study provided useful lessons for informing the funding agency's subsequent action; the studies "provide reasons to advocate for oriented research to fill specific knowledge gaps" ([32], p. 327) |
| Alberta Heritage Fund for Medical Research, 2003 [33]; Alberta, Canada | Alberta Heritage Fund for Medical Research – Health research | Survey to PIs (100, 50 responded, 50%); interviews with decision makers and users | Version of Payback Framework | 49% impact on policy; 39% changed behaviour; 40% health sector benefits | Research teams with decision makers or users more successful than those without |
| Bodeau-Livinec et al., 2006 [34]; France | French Committee for the Assessment and Dissemination of Technological Innovations (CEDIT) – Health technology assessment (HTA) | Semi-directive interviews with stakeholders affected by the recommendations (14); case studies used surveys in hospitals to examine impact of the recommendations (13) | No framework stated, but approach to scoring impact followed earlier studies of the CETS in Quebec reported by Jacob et al. [47, 48] | Widespread interest, "used as decision-making tools by administrative staff and as a negotiating instrument by doctors in their dealings with management […] ten of thirteen recommendations had an impact on the introduction of technology in health establishments" ([34], p. 161); 7 considerable, 3 moderate: total 77% | Main factor fostering compliance with recommendations "appears to be a system of regulation" ([34], p. 166). Reviewed other studies: "All these experiences together with our own work suggest that the impact of HTA on practices and introduction of new technologies is higher the more circumscribed is the target of the recommendation" ([34], p. 167) |
| Brambila et al., 2007 [35]; Guatemala | Population Council – Programme of Operation Research projects in reproductive health in Guatemala | Key informant (KI) interviews; document review; site visits to health centres and nongovernmental organisations implementing operational research interventions; scored 22 projects (out of 44 conducted between 1988 and 2001) on indicators: 14 process; 11 impact; 6 context | Developed an approach involving process, impact and contextual factors; drew on literature such as Weiss [18] and interactive approaches | Of the 22, 13 projects intervention effective in improving results, three interventions not effective; in 14 studies implementing agency acted on results; nine interventions scaled up in same organisation; five adopted by another organisation in Guatemala; some studies led to policy changes, mainly at the programme level (total 64% impact in combined policy and practice category) | Highlighted how impact can arise from a long-term approach and the several 5-year cycles of funding "allowed for the accumulation of evidence in addition to the development of collaborative ties between researchers and practitioners, which ultimately resulted in changes to the service delivery environments" ([35], p. 242) |
| Buxton et al., 1999 [36]; United Kingdom | NHS North Thames Region – Wide-ranging responsive mode R&D programme | Questionnaires to PIs (164, 115 responded, 70%) and some bibliometric analysis for all projects and case studies (19); case studies included interviews with researchers and some users | Payback Framework; benefit scoring system based on two criteria (importance of the research to the changes, and level at which the change was made) was used to score questionnaire responses about the impacts and re-score the impact from each study on which a case study was conducted | 41% impact on policy; 43% change in practitioner/manager behaviour; 37% led to benefits to health and health service | The survey/case study comparison suggests "greater detail and depth of the case studies often leads to a somewhat different judgement of payback, but there is no evidence of a systematic underassessment of payback from the questionnaire approach, nor, generally, of greatly exaggerated claims being made by researchers in the self-completed questionnaires" ([36], p. 196) |
| Caddell et al., 2010 [37]; Canada | IWK Health Centre, Halifax, Canada, Research Operating Grants (small grants) – Women and children's health | Online questionnaire to PIs and co-investigators (Co-Is) (64, 39 responded, 61%) | Research Impact Framework: adapted | 16% policy impact: 8% in health centre, 8% beyond; 32% said resulted in a change in clinical practice; 55% informed clinical practice by providing broader clinical understanding and increased awareness (average of 43% for practice impact); 46% improved quality of care | An association between presenting at conferences and practice impacts; authors stress link between research and excellence in healthcare: "It is essential that academic health centres engage actively in ensuring that a culture of research inquiry is maintained" ([37], p. 4) |
| Donovan et al., 2014 [38]; Australia | National Breast Cancer Foundation – Wide range of programmes | Documentary analysis, bibliometrics, survey of PIs (242, 153 responded, 63%), 16 case studies, cross-case analysis | Payback Framework | 10% impact on policy – 29% expected to do so; 11% contributed to product development; 14% impact on practice/behaviour – 39% expected to do so | Basic research – more impact on knowledge and drug development; applied research – greater impact in other payback categories; many projects had only recently been completed – more impact expected; in launching the report the charity highlighted how it was informing their funding strategy [92] |
| Expert Panel for Health Directorate of the European Commission's Research Innovation Directorate General, 2013 [39]; European Union | European Union Framework Programmes 5, 6, and 7 – Public health projects | Documentary review: all 70 completed projects; 120 ongoing; KI interviews with particularly successful and underperforming projects (16); data extraction form constructed based on the categories from the Payback Framework, with each of the main categories broken down into a series of specific questions | Payback Framework | Appendix 1: only 6 out of the 70 completed projects did not achieve the primary intended output; 42% took actions to engage or inform policymakers; 4 (6%) projects change of policy, 22% expected to do so; 7 (10%) impact on health practitioners; 6 (9%) impact on health service delivery and 6 (9%) impact on health; 1 beneficial impact on small/medium-sized enterprise | Used documentary review, therefore for completed projects had data about whole set; however, "Extensive follow up of the post-project impact of completed projects was not possible" ([39] p. 9). Comprehensive coverage of a programme without requiring additional data from the researchers; however, also shows the limitations of such an approach in capturing later impacts |
| Ferguson et al., 1998 [40]; United Kingdom | NHS Northern and Yorkshire Region – Health Services Research (HSR) (two other programmes not included here) | Desk analysis (bibliometrics), surveys to gather quantitative and qualitative data sent to all PIs and Co-Is in all three programmes: but only HSR projects asked about policy, so just the 32 HSR responses analysed here | Refer to Payback Framework; no attempt to develop own | Five HSR projects (16%) had a policy impact, i.e. "Better informed commissioning and contracting" ([40] p. 17); 5 (16%) led to a change in NHS practice, i.e. "More effective treatment, screening or management for patients" ([40], p. 16) | This was part of a wider analysis, but in all three areas the projects were reactive; particularly difficult to make an impact with Primary and Community Care research |
| Gold & Taylor, 2007 [41]; United States of America | Agency for Healthcare Research and Quality – Integrated delivery systems research network | Documentary review of programme as a whole and individual projects (50); descriptive interviews (85); four case studies, additional interviews | No explicit framework described | Changes in operations; "Of the 50 completed projects studied, 30 had an operational effect or use" [41] (operational effect or use is a broad term, so the 60% was put into our combined impact category) | Success factors: responsiveness of project work to delivery system needs, ongoing funding, development of tools that helped users see their operational relevance |
| Gutman et al., 2009 [42]; United States of America | Robert Wood Johnson Foundation – Active living research | A retrospective, in-depth, descriptive study utilising multiple methods; quantitative data derived primarily from a web-based survey of grantee investigators (PIs, Co-PIs); of the 74 projects, 68 responses analysed; qualitative data from 88 interviews with KIs | The conceptual model used in the programme "was used to guide the evaluation" ([42], p. S23). Aspects of Weiss's model used for analysing policy contributions | Generally thought to be too early for much policy impact, but 25% of survey, 43% of interviewees reported a policy impact; however, policy impact in survey could be from active living research in general, not just the specific programme, and could include: "a specific interaction with policymakers (e.g. testifying, meeting with policymakers, policymaker briefings, etc.) or direct evidence of the research findings in a written policy" ([42], p. S33) | Only 16% of grants had been completed prior to the year of the evaluation; some approaches "worked well, including developing a multifaceted, ongoing, interactive relationship with advocacy and policymaker organizations" ([42], p. S32); grantees who completed both interviews and surveys generally gave similar responses, but researchers included in the random sample of interviewees gave a higher percentage of policy impact than researchers surveyed; questions slightly different in the interviews than in the surveys |
| Hailey et al., 1990 [43]; Australia | National Health Technology Advisory Panel – HTA reports | Looked at technologies (20) covered by HTA reports from the panel up to end of 1988. Little provided on methods – presumably desk analysis, just states comparing recommendations, assessments and policy activities | No framework described | Out of the first 20 technologies covered by HTA reports there had been significant impact in 11 and probable influence in three: 70% in total | |
| Hailey et al., 2000 [44]; Canada | Canadian province (not stated) – HTA brief tech notes | Interviews with those requesting the 20 brief HTA notes (i.e. reviews); checks on quality of the reports made using desk analysis and comments from experts | No framework described | 14 (70%) had influence on policy and other decisions | |
| Hanney et al., 2007 [6]; United Kingdom | National Health Service (NHS) – HTA programme | Multiple methods: literature review, funder documents, survey all PIs of projects between 1993 and 2003 (204, 133 responses, 65%), case studies with interviews (16) | Payback Framework | Technology Assessment Reports (TARs) produced for the National Institute for Health and Clinical Excellence (NICE): 96% impact on policy, 60% on clinician behaviour; primary and secondary HTA research: 60% impact on policy, 31% on behaviour. Average for programme: 73% impact on policy, 42% on behaviour; case studies showed large diversity in levels and forms of impacts and the way in which they arise | |
| Hanney et al., 2013 [45]; United Kingdom | Asthma UK – All programmes of Asthma research | Survey of all PIs (153, 96 responses, 59%), documents, case studies (14) involving interviews and some expanding the approach to cover role of chairs and centre | Payback Framework | 13% impact on policy; 17% product development; 6% health gain; but case studies reveal some important examples of influence on guidelines, some potentially major breakthroughs in asthma therapies, establishment of pioneering collaborative research centre | Many types of research and modes of funding – long-term funding of chairs led to … |
| Hera, 2014 [46]; Africa | Africa Health Systems Initiative Support to African Research Partnerships | Documentary review; interviews at programme level; project level information – for six projects, workshops, for the remaining four a total of 12 interviews; participant … | | Policy impact was created during the research process: 7 out of 10 projects reported policy impact already, "The policy dialogue is not yet … | "Research teams who started the policy dialogue early and maintained it throughout the study, and teams that engaged with decision-makers at local level, district and national levels … |
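The 17–23% bounds reported for Adam et al. [32] in Table 1 can be reproduced under one reading of the overlap caveat, which is our assumption rather than something the study states. With 70 responding PIs, complete overlap means the 12 organisational-change examples came from 12 different PIs and every other example came from one of them (12/70, about 17%); no overlap means all 16 examples came from different PIs (16/70, about 23%). The function name `overlap_bounds` is hypothetical.

```python
def overlap_bounds(example_counts, respondents):
    """Return (lower, upper) % of respondents reporting at least one example.

    Hypothetical helper. Assumes each category's examples come from distinct
    respondents, so complete overlap leaves max(example_counts) distinct
    respondents and no overlap gives sum(example_counts), capped at the total.
    """
    lower = max(example_counts)
    upper = min(sum(example_counts), respondents)
    return 100 * lower / respondents, 100 * upper / respondents


# Adam et al. [32]: 12 organisational, 2 public health management and
# 2 legal/regulatory examples, among 70 responding PIs.
low, high = overlap_bounds([12, 2, 2], 70)
print(f"{low:.0f}-{high:.0f}%")  # prints "17-23%"
```

The 20% figure used in the analysis lies between these two bounds.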
