The Mathematical Foundations Of Policy Gradient Methods

Sham M. Kakade, University of Washington & Microsoft Research

Reinforcement (interactive) learning (RL):

Markov Decision Processes: a framework for RL (Sutton & Barto ’18)
S states, starting with $s_0 \sim d_0$; A actions; a dynamics model $P(s' \mid s, a)$; a reward function $r(s)$; and a discount factor $\gamma$.
A policy $\pi$: States → Actions. A stochastic policy samples $a_t \sim \pi(\cdot \mid s_t)$. We execute $\pi$ to obtain a trajectory $s_0, a_0, r_0, s_1, a_1, r_1, \ldots$
Total $\gamma$-discounted reward:
$$V^{\pi}(s_0) = \mathbb{E}\Big[\sum_{t=0}^{\infty}\gamma^t r_t \,\Big|\, s_0, \pi\Big] = \mathbb{E}\big[r(s_0) + \gamma r(s_1) + \gamma^2 r(s_2) + \cdots\big],$$
where the distribution of $s_t, a_t$ is induced by $\pi$.
Goal: find a policy $\pi$ that maximizes our value $V^{\pi}(s_0)$.

Dexterous Robotic Hand Manipulation (OpenAI, Oct 15, 2019)
Challenges in RL:
1. Exploration (the environment may be unknown).
2. Credit assignment problem (due to delayed rewards).
3. Large state/action spaces: hand state (joint angles/velocities), cube state (configuration), actions (forces applied to actuators).

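Values, State-Action Values, and Advantages
$$V^{\pi}(s_0) = \mathbb{E}\Big[\sum_{t=0}^{\infty}\gamma^t r(s_t,a_t) \,\Big|\, s_0, \pi\Big], \qquad Q^{\pi}(s_0,a_0) = \mathbb{E}\Big[\sum_{t=0}^{\infty}\gamma^t r(s_t,a_t) \,\Big|\, s_0, a_0, \pi\Big], \qquad A^{\pi}(s,a) = Q^{\pi}(s,a) - V^{\pi}(s).$$
Expectations are with respect to sampled trajectories under $\pi$. We have S states and A actions. The effective “horizon” is $1/(1-\gamma)$ time steps.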
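These three quantities can be computed exactly for a small, fully known MDP. The sketch below is a minimal illustration that assumes NumPy and a randomly generated toy MDP. The helper names `random_mdp` and `evaluate_policy` are hypothetical. It solves the Bellman equation $V = r_\pi + \gamma P_\pi V$ as a linear system, then forms $Q$ and $A$.

```python
import numpy as np

def random_mdp(num_states, num_actions, seed=0):
    """Hypothetical toy MDP: P[s, a, s'] = Pr(s' | s, a) and rewards r[s, a] in [0, 1)."""
    rng = np.random.default_rng(seed)
    P = rng.random((num_states, num_actions, num_states))
    P /= P.sum(axis=2, keepdims=True)
    r = rng.random((num_states, num_actions))
    return P, r

def evaluate_policy(pi, P, r, gamma):
    """Exact V^pi, Q^pi and A^pi for a stochastic policy pi[s, a] = pi(a | s)."""
    num_states = r.shape[0]
    P_pi = np.einsum("sa,sat->st", pi, P)   # state-to-state transitions under pi
    r_pi = np.sum(pi * r, axis=1)           # expected one-step reward under pi
    # V = r_pi + gamma * P_pi V is a linear system in V.
    V = np.linalg.solve(np.eye(num_states) - gamma * P_pi, r_pi)
    Q = r + gamma * P @ V                   # Q(s, a) = r(s, a) + gamma * E[V(s') | s, a]
    A = Q - V[:, None]                      # A(s, a) = Q(s, a) - V(s)
    return V, Q, A

P, r = random_mdp(num_states=4, num_actions=3)
gamma = 0.9                                 # effective horizon 1 / (1 - gamma) = 10 steps
pi = np.full((4, 3), 1.0 / 3)               # uniform random policy
V, Q, A = evaluate_policy(pi, P, r, gamma)
print(np.round(V, 3))
print(np.allclose(np.sum(pi * A, axis=1), 0.0))   # True: E_{a ~ pi}[A^pi(s, a)] = 0
```

The last check reflects the identity $\mathbb{E}_{a\sim\pi}[A^{\pi}(s,a)] = 0$. It follows from $V^{\pi}(s) = \mathbb{E}_{a\sim\pi}[Q^{\pi}(s,a)]$.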

The “Tabular” Dynamic Programming Approach
Table: ‘bookkeeping’ for dynamic programming (with known rewards/dynamics). Each entry pairs a state $s$ (joint angles, cube config, …), e.g. (31, 12, …, 8134, …), with an action $a$ (forces at joints), e.g. (1.2 Newton, 0.1 Newton, …). It stores the state-action value $Q^{\pi}(s,a)$, the “one step look-ahead value” using $\pi$, e.g. 8 units of reward.
1. Estimate the state-action value $Q^{\pi}(s,a)$ for every entry in the table.
2. Update the policy $\pi$ and go to step 1.
Generalization: how can we deal with this infinite table? Using sampling/supervised learning?
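With known rewards and dynamics, the two-step loop above is classic policy iteration. This is a minimal sketch under the following assumptions: a hypothetical random toy MDP with illustrative helper names, exact evaluation of $Q^{\pi}$ by a linear solve, and a fully greedy step 2.

```python
import numpy as np

def random_mdp(num_states, num_actions, seed=0):
    """Hypothetical toy MDP with known dynamics P[s, a, s'] and rewards r[s, a]."""
    rng = np.random.default_rng(seed)
    P = rng.random((num_states, num_actions, num_states))
    P /= P.sum(axis=2, keepdims=True)
    return P, rng.random((num_states, num_actions))

def q_table(pi, P, r, gamma):
    """Fill in every table entry Q^pi(s, a) by solving the Bellman equation for V^pi."""
    P_pi = np.einsum("sa,sat->st", pi, P)
    V = np.linalg.solve(np.eye(len(r)) - gamma * P_pi, np.sum(pi * r, axis=1))
    return r + gamma * P @ V

def policy_iteration(P, r, gamma, max_iters=1000):
    num_states, num_actions = r.shape
    actions = np.zeros(num_states, dtype=int)   # start from an arbitrary deterministic policy
    for _ in range(max_iters):
        pi = np.eye(num_actions)[actions]       # deterministic policy as a table pi[s, a]
        Q = q_table(pi, P, r, gamma)            # step 1: estimate Q^pi for every entry
        new_actions = Q.argmax(axis=1)          # step 2: update the policy (greedy w.r.t. Q^pi)
        if np.array_equal(new_actions, actions):
            return actions, Q                   # no change: the policy is optimal
        actions = new_actions
    return actions, Q

P, r = random_mdp(num_states=6, num_actions=3)
gamma = 0.9
actions, Q = policy_iteration(P, r, gamma)
V = Q.max(axis=1)
# At the fixed point, V satisfies the Bellman optimality equation V(s) = max_a [r + gamma P V](s, a).
print(np.allclose(V, (r + gamma * P @ V).max(axis=1)))
```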

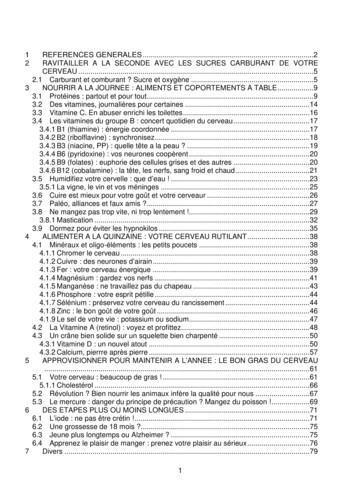

This Tutorial: Mathematical Foundations of Policy Gradient Methods
§ Part I: Basics
  A. Derivation and Estimation
  B. Preconditioning and the Natural Policy Gradient
§ Part II: Convergence and Approximation
  A. Convergence: this is a non-convex problem!
  B. Approximation: how should we think about the role of deep learning?

Part-1: Basics

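State-Action Visitation Measures!
This helps to clean up notation! The “occupancy frequency” of being in state $s$ (and taking action $a$) after following $\pi$ starting in $s_0$ is
$$d^{\pi}_{s_0}(s) = (1-\gamma)\,\mathbb{E}\Big[\sum_{t=0}^{\infty}\gamma^t\,\mathbb{I}(s_t = s)\,\Big|\,s_0, \pi\Big].$$
$d^{\pi}_{s_0}$ is a probability distribution. With this notation:
$$V^{\pi}(s_0) = \frac{1}{1-\gamma}\,\mathbb{E}_{s\sim d^{\pi}_{s_0},\,a\sim\pi(\cdot\mid s)}\big[r(s,a)\big].$$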
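In the tabular case the visitation measure has a closed form: $\sum_t \gamma^t \Pr(s_t = s\mid s_0)$ is row $s_0$ of $(I-\gamma P_\pi)^{-1}$. The sketch below uses NumPy with a hypothetical random MDP and policy. It computes $d^{\pi}_{s_0}$ this way, then checks that it sums to one and that the identity for $V^{\pi}(s_0)$ holds.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma, s0 = 5, 3, 0.9, 0          # hypothetical sizes; start state s0 = 0
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))
pi = rng.random((S, A)); pi /= pi.sum(axis=1, keepdims=True)   # an arbitrary stochastic policy

P_pi = np.einsum("sa,sat->st", pi, P)   # P_pi[s, s'] = Pr(s' | s) under pi
M = np.eye(S) - gamma * P_pi

# sum_t gamma^t Pr(s_t = s | s0) is row s0 of M^{-1}; d^pi_{s0} is that row times (1 - gamma).
d = (1 - gamma) * np.linalg.solve(M.T, np.eye(S)[s0])

V = np.linalg.solve(M, np.sum(pi * r, axis=1))
V_from_d = np.sum(d[:, None] * pi * r) / (1 - gamma)   # E_{s~d, a~pi}[r(s, a)] / (1 - gamma)

print(np.isclose(d.sum(), 1.0), np.isclose(V[s0], V_from_d))   # True True
```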

Direct Policy Optimization over Stochastic Policies
$\pi_\theta(a\mid s)$ is the probability of action $a$ given $s$, parameterized by $\pi_\theta(a\mid s) \propto \exp(f_\theta(s,a))$.
Softmax policy class: $f_\theta(s,a) = \theta_{s,a}$.
Linear policy class: $f_\theta(s,a) = \theta\cdot\phi(s,a)$, where $\phi(s,a)\in\mathbb{R}^d$.
Neural policy class: $f_\theta(s,a)$ is a neural network.
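The three classes differ only in how the logits $f_\theta(s,a)$ are produced; the softmax normalization is shared. This is a minimal NumPy sketch that assumes tabular inputs. The features `phi`, the sizes, and the one-hidden-layer tanh network are illustrative stand-ins, not a prescribed architecture.

```python
import numpy as np

def softmax_policy(logits):
    """pi(a | s) proportional to exp(f(s, a)); logits[s, a] = f(s, a)."""
    z = logits - logits.max(axis=-1, keepdims=True)   # shift for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def softmax_class(theta):            # f_theta(s, a) = theta[s, a]: S * A parameters
    return softmax_policy(theta)

def linear_class(theta, phi):        # f_theta(s, a) = theta . phi(s, a), with phi[s, a] in R^k
    return softmax_policy(phi @ theta)

def neural_class(params, phi):       # f_theta(s, a) = a one-hidden-layer tanh network of phi(s, a)
    W1, b1, w2 = params
    hidden = np.tanh(phi @ W1 + b1)
    return softmax_policy(hidden @ w2)

rng = np.random.default_rng(0)
S, A, feat_dim, hid = 4, 3, 5, 8     # hypothetical sizes
phi = rng.normal(size=(S, A, feat_dim))   # hypothetical features
policies = [
    softmax_class(rng.normal(size=(S, A))),
    linear_class(rng.normal(size=feat_dim), phi),
    neural_class((rng.normal(size=(feat_dim, hid)), rng.normal(size=hid), rng.normal(size=hid)), phi),
]
for pi in policies:
    print(pi.shape, np.allclose(pi.sum(axis=1), 1.0))   # (4, 3) True

# The softmax class is the linear class with one-hot features phi(s, a) = e_{(s, a)}.
one_hot = np.eye(S * A).reshape(S, A, S * A)
theta = rng.normal(size=(S, A))
print(np.allclose(linear_class(theta.ravel(), one_hot), softmax_class(theta)))   # True
```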

In practice, policy gradient methods rule
They are the most effective methods for obtaining state-of-the-art results:
$$\theta \leftarrow \theta + \eta\,\nabla_\theta V^{\pi_\theta}(s_0).$$
Why do we like them?
They easily deal with large state/action spaces (through the neural net parameterization).
We can estimate the gradient using only simulation of our current policy $\pi_\theta$ (the expectation is under the states and actions visited under $\pi_\theta$).
They directly optimize the cost function of interest!

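Two (equal) expressions for the policy gradient!
$$\nabla V^{\pi_\theta}(s_0) = \frac{1}{1-\gamma}\,\mathbb{E}_{s\sim d^{\pi_\theta}_{s_0},\,a\sim\pi_\theta(\cdot\mid s)}\big[Q^{\pi_\theta}(s,a)\,\nabla\log\pi_\theta(a\mid s)\big] = \frac{1}{1-\gamma}\,\mathbb{E}_{s\sim d^{\pi_\theta}_{s_0},\,a\sim\pi_\theta(\cdot\mid s)}\big[A^{\pi_\theta}(s,a)\,\nabla\log\pi_\theta(a\mid s)\big]$$
(some shorthand notation above). Where do these expressions come from? How do we compute them?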
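For the tabular softmax class both expectations can be evaluated exactly, so the equality is easy to check. This sketch assumes a hypothetical random MDP. The two forms agree because $\mathbb{E}_{a\sim\pi}[\nabla\log\pi(a\mid s)] = 0$, so subtracting the baseline $V^{\pi}(s)$ from $Q^{\pi}$ changes nothing in expectation.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma, s0 = 4, 3, 0.9, 0               # hypothetical toy MDP
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))
theta = rng.normal(size=(S, A))
pi = np.exp(theta - theta.max(axis=1, keepdims=True)); pi /= pi.sum(axis=1, keepdims=True)

# Exact V, Q, A and the discounted visitation d^pi_{s0}.
M = np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P)
V = np.linalg.solve(M, np.sum(pi * r, axis=1))
Q = r + gamma * P @ V
Adv = Q - V[:, None]
d = (1 - gamma) * np.linalg.solve(M.T, np.eye(S)[s0])

# Tabular softmax score: d log pi(a|s) / d theta[s', a'] = 1[s' = s] * (1[a' = a] - pi(a'|s)).
score = np.zeros((S, A, S, A))
for s in range(S):
    score[s, :, s, :] = np.eye(A) - pi[s]

def policy_gradient(values):
    """(1 / (1 - gamma)) * E_{s~d, a~pi}[values(s, a) * grad log pi(a|s)], shaped like theta."""
    return np.einsum("s,sa,sa,saij->ij", d, pi, values, score) / (1 - gamma)

grad_Q = policy_gradient(Q)
grad_A = policy_gradient(Adv)
print(np.allclose(grad_Q, grad_A))   # True: the baseline V(s) drops out
```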

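Example: an important special case!
Remember the softmax policy class (a “tabular” parameterization): $\pi_\theta(a\mid s) \propto \exp(\theta_{s,a})$.
This is a complete class with $SA$ parameters (one parameter per state-action pair), so it contains the optimal policy.
Expression for the softmax class:
$$\frac{\partial V^{\pi_\theta}(s_0)}{\partial \theta_{s,a}} = \frac{1}{1-\gamma}\,d^{\pi_\theta}_{s_0}(s)\,\pi_\theta(a\mid s)\,A^{\pi_\theta}(s,a).$$
Intuition: increase $\theta_{s,a}$ if the ‘weighted’ advantage is large.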
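One way to confirm the softmax expression is to compare it against central finite differences of $V^{\pi_\theta}(s_0)$. This is a minimal sketch on a hypothetical random MDP using NumPy; the value of `eps` is an arbitrary choice.

```python
import numpy as np

rng = np.random.default_rng(1)
S, A, gamma, s0 = 4, 3, 0.9, 0                 # hypothetical toy MDP
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))

def softmax(theta):
    e = np.exp(theta - theta.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def value_and_advantage(theta):
    pi = softmax(theta)
    M = np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P)
    V = np.linalg.solve(M, np.sum(pi * r, axis=1))
    Adv = r + gamma * P @ V - V[:, None]
    d = (1 - gamma) * np.linalg.solve(M.T, np.eye(S)[s0])   # d^pi_{s0}(s)
    return V, Adv, d, pi

theta = rng.normal(size=(S, A))
V, Adv, d, pi = value_and_advantage(theta)
closed_form = d[:, None] * pi * Adv / (1 - gamma)   # dV(s0) / dtheta[s, a]

# Central finite differences of V^{pi_theta}(s0), one coordinate at a time.
eps = 1e-6
finite_diff = np.zeros_like(theta)
for s in range(S):
    for a in range(A):
        step = np.zeros_like(theta); step[s, a] = eps
        finite_diff[s, a] = (value_and_advantage(theta + step)[0][s0]
                             - value_and_advantage(theta - step)[0][s0]) / (2 * eps)

print(np.max(np.abs(closed_form - finite_diff)))   # small: finite-difference error only
```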

Part-1A: Derivations and Estimation

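General Derivation
$$\begin{aligned}
\nabla V^{\pi}(s_0) &= \nabla \sum_{a_0} \pi(a_0\mid s_0)\,Q^{\pi}(s_0,a_0) \\
&= \sum_{a_0} \big(\nabla\pi(a_0\mid s_0)\big)\,Q^{\pi}(s_0,a_0) + \sum_{a_0}\pi(a_0\mid s_0)\,\nabla Q^{\pi}(s_0,a_0) \\
&= \sum_{a_0}\pi(a_0\mid s_0)\big(\nabla\log\pi(a_0\mid s_0)\big)\,Q^{\pi}(s_0,a_0) + \sum_{a_0}\pi(a_0\mid s_0)\,\nabla\Big(r(s_0,a_0) + \gamma\sum_{s_1}P(s_1\mid s_0,a_0)\,V^{\pi}(s_1)\Big) \\
&= \sum_{a_0}\pi(a_0\mid s_0)\big(\nabla\log\pi(a_0\mid s_0)\big)\,Q^{\pi}(s_0,a_0) + \gamma\sum_{a_0,s_1}\pi(a_0\mid s_0)\,P(s_1\mid s_0,a_0)\,\nabla V^{\pi}(s_1) \\
&= \mathbb{E}\big[Q^{\pi}(s_0,a_0)\,\nabla\log\pi(a_0\mid s_0)\big] + \gamma\,\mathbb{E}\big[\nabla V^{\pi}(s_1)\big].
\end{aligned}$$
Unrolling this recursion over time steps gives $\nabla V^{\pi}(s_0) = \mathbb{E}\big[\sum_{t=0}^{\infty}\gamma^t Q^{\pi}(s_t,a_t)\,\nabla\log\pi(a_t\mid s_t)\big]$. This is the $Q$-form of the policy gradient, written above using the visitation measure $d^{\pi}_{s_0}$.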

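SL vs RL: How do we obtain gradients?
In supervised learning, how do we compute the gradient of our loss $L(\theta)$ for the update $\theta \leftarrow \theta - \eta\,\nabla L(\theta)$? Hint: can we compute our loss?
In reinforcement learning, how do we compute the policy gradient $\nabla V^{\pi_\theta}(s_0)$ for the update $\theta \leftarrow \theta + \eta\,\nabla V^{\pi_\theta}(s_0)$, where
$$\nabla V^{\pi_\theta}(s_0) = \frac{1}{1-\gamma}\,\mathbb{E}_{s\sim d^{\pi_\theta}_{s_0},\,a\sim\pi_\theta(\cdot\mid s)}\big[Q^{\pi_\theta}(s,a)\,\nabla\log\pi_\theta(a\mid s)\big]?$$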

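Monte Carlo Estimation
Sample a trajectory: execute $\pi_\theta$ and observe $s_0, a_0, r_0, s_1, a_1, r_1, \ldots$ Then form
$$\widehat{Q}(s_t,a_t) = \sum_{t'=0}^{\infty}\gamma^{t'} r(s_{t+t'},a_{t+t'}), \qquad \widehat{\nabla V} = \sum_{t=0}^{\infty}\gamma^{t}\,\widehat{Q}(s_t,a_t)\,\nabla\log\pi_\theta(a_t\mid s_t).$$
Lemma [Glynn ’90, Williams ’92]: this gives an unbiased estimate of the gradient, $\mathbb{E}\big[\widehat{\nabla V}\big] = \nabla V^{\pi_\theta}(s_0)$.
This is the “likelihood ratio” method.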
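The estimator can be sketched for the tabular softmax, where $\nabla_{\theta_{s,\cdot}}\log\pi_\theta(a\mid s) = e_a - \pi_\theta(\cdot\mid s)$. This minimal, vectorized illustration rests on three assumptions:
- a hypothetical random toy MDP;
- rollouts truncated at a finite `horizon`, which adds a bias of order $\gamma^{\text{horizon}}$;
- many independent trajectories.

It compares the averaged estimate with the exact softmax gradient.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma, s0 = 3, 2, 0.5, 0        # hypothetical toy MDP; small gamma keeps rollouts short
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))
theta = rng.normal(size=(S, A))
pi = np.exp(theta - theta.max(axis=1, keepdims=True)); pi /= pi.sum(axis=1, keepdims=True)

def sample_rows(probs):
    """Draw one index per row of probs, shape (N, K) -> (N,)."""
    u = rng.random((probs.shape[0], 1))
    idx = (probs.cumsum(axis=1) < u).sum(axis=1)
    return np.minimum(idx, probs.shape[1] - 1)   # guard against round-off in cumsum

def reinforce(num_traj=20000, horizon=30):
    """Average over trajectories of sum_t gamma^t Q_hat(s_t, a_t) grad log pi(a_t | s_t)."""
    states = np.full(num_traj, s0)
    traj = []
    for _ in range(horizon):                     # truncation: gamma**30 is about 1e-9 here
        actions = sample_rows(pi[states])
        traj.append((states, actions, r[states, actions]))
        states = sample_rows(P[states, actions])
    grad = np.zeros_like(theta)
    q_hat = np.zeros(num_traj)
    for t in reversed(range(horizon)):
        s, a, rew = traj[t]
        q_hat = rew + gamma * q_hat              # Q_hat(s_t, a_t) = sum_{t'} gamma^{t'} r_{t+t'}
        score = np.eye(A)[a] - pi[s]             # grad_{theta[s, :]} log pi(a | s) for the softmax
        np.add.at(grad, s, (gamma ** t) * q_hat[:, None] * score)
    return grad / num_traj

# Exact gradient for comparison: d(s) pi(a|s) A(s, a) / (1 - gamma).
M = np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P)
V = np.linalg.solve(M, np.sum(pi * r, axis=1))
Adv = r + gamma * P @ V - V[:, None]
d = (1 - gamma) * np.linalg.solve(M.T, np.eye(S)[s0])
exact = d[:, None] * pi * Adv / (1 - gamma)

estimate = reinforce()
print(np.max(np.abs(estimate - exact)))   # shrinks like 1 / sqrt(num_traj)
```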

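Back to the softmax policy class
$\pi_\theta(a\mid s) \propto \exp(\theta_{s,a})$. Expression for the softmax class:
$$\frac{\partial V^{\pi_\theta}(s_0)}{\partial \theta_{s,a}} = \frac{1}{1-\gamma}\,d^{\pi_\theta}_{s_0}(s)\,\pi_\theta(a\mid s)\,A^{\pi_\theta}(s,a).$$
What might be making gradient estimation difficult here? (Hint: when does gradient descent “effectively” stop?)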

Part-1B: Preconditioning and the Natural Policy Gradient

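A closer look at Natural Policy Gradient (NPG)
Practice: (almost) all methods are gradient based, usually variants of Natural Policy Gradient [K. ’01], TRPO [Schulman ’15], and PPO [Schulman ’17].
NPG warps the distance metric to stretch the corners out (using the Fisher information metric), so it moves ‘more’ near the boundaries. The update is:
$$F(\theta) = \mathbb{E}_{s\sim d^{\pi_\theta}_{s_0},\,a\sim\pi_\theta(\cdot\mid s)}\big[\nabla\log\pi_\theta(a\mid s)\,\nabla\log\pi_\theta(a\mid s)^{\top}\big], \qquad \theta \leftarrow \theta + \eta\,F(\theta)^{-1}\,\nabla V^{\pi_\theta}(s_0).$$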
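For the tabular softmax class, the Fisher matrix and the NPG step can be formed explicitly. This sketch uses NumPy on a hypothetical random MDP. Here $F(\theta)$ is singular, because adding a constant to every $\theta_{s,\cdot}$ leaves $\pi_\theta$ unchanged, so the sketch assumes a pseudo-inverse in place of the inverse. It then checks that the resulting policy equals $\pi_\theta(a\mid s)\exp(\eta A^{\pi_\theta}(s,a)/(1-\gamma))$, renormalized.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma, s0, eta = 4, 3, 0.9, 0, 0.1      # hypothetical toy MDP and step size
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))

def softmax(theta):
    e = np.exp(theta - theta.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

theta = rng.normal(size=(S, A))
pi = softmax(theta)
M = np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P)
V = np.linalg.solve(M, np.sum(pi * r, axis=1))
Adv = r + gamma * P @ V - V[:, None]
d = (1 - gamma) * np.linalg.solve(M.T, np.eye(S)[s0])

# Score vectors grad log pi(a|s), flattened over the S * A softmax parameters.
score = np.zeros((S, A, S, A))
for s in range(S):
    score[s, :, s, :] = np.eye(A) - pi[s]
score = score.reshape(S, A, S * A)

F = np.einsum("s,sa,sai,saj->ij", d, pi, score, score)      # Fisher information F(theta)
grad = (d[:, None] * pi * Adv).ravel() / (1 - gamma)         # exact policy gradient

# Pseudo-inverse of the symmetric PSD matrix F: invert only the non-negligible eigenvalues.
w, U = np.linalg.eigh(F)
keep = w > 1e-10 * w.max()
F_pinv = (U[:, keep] / w[keep]) @ U[:, keep].T
theta_npg = theta + eta * (F_pinv @ grad).reshape(S, A)

# Multiplicative-weights form: pi * exp(eta * A / (1 - gamma)), renormalized per state.
pi_mw = pi * np.exp(eta * Adv / (1 - gamma)); pi_mw /= pi_mw.sum(axis=1, keepdims=True)
print(np.allclose(softmax(theta_npg), pi_mw))   # True
```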

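TRPO (Trust Region Policy Optimization)
TRPO [Schulman ’15] (related: PPO [Schulman ’17]): move while staying “close” in KL to the previous policy:
$$\theta^{t+1} = \arg\max_{\theta}\, V^{\pi_\theta}(s_0) \quad \text{s.t.}\quad \mathbb{E}_{s\sim d^{\pi_{\theta^t}}}\big[\mathrm{KL}\big(\pi_{\theta^t}(\cdot\mid s)\,\|\,\pi_\theta(\cdot\mid s)\big)\big] \le \delta.$$
NPG = TRPO: they are first-order equivalent (and have the same practical behavior).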
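The first-order equivalence rests on the Fisher matrix being the Hessian of the KL divergence: $\mathrm{KL}(\pi_\theta\,\|\,\pi_{\theta+\Delta}) \approx \tfrac12\Delta^\top F(\theta)\Delta$ for small $\Delta$. A single-state softmax policy is enough to see this numerically. In this illustrative NumPy sketch, the logits and $\Delta$ are random.

```python
import numpy as np

def log_softmax(x):
    z = x - x.max()
    return z - np.log(np.exp(z).sum())

rng = np.random.default_rng(0)
theta = rng.normal(size=5)                      # logits of a single-state softmax policy
pi = np.exp(log_softmax(theta))
F = np.diag(pi) - np.outer(pi, pi)              # Fisher information E_{a~pi}[grad log pi grad log pi^T]

delta = 1e-3 * rng.normal(size=5)               # a small parameter change
kl = np.sum(pi * (log_softmax(theta) - log_softmax(theta + delta)))   # KL(pi_theta || pi_{theta+delta})
quad = 0.5 * delta @ F @ delta
print(kl / quad)                                # close to 1; the gap shrinks as delta -> 0
```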

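NPG intuition. But first…
NPG as preconditioning:
$$\theta \leftarrow \theta + \eta\,F(\theta)^{-1}\nabla V^{\pi_\theta}(s_0) \quad\text{OR}\quad \theta \leftarrow \theta + \frac{\eta}{1-\gamma}\,\mathbb{E}\big[\nabla\log\pi_\theta(a\mid s)\,\nabla\log\pi_\theta(a\mid s)^{\top}\big]^{-1}\,\mathbb{E}\big[\nabla\log\pi_\theta(a\mid s)\,A^{\pi_\theta}(s,a)\big].$$
What does the following remind you of? $\mathbb{E}[XX^{\top}]^{-1}\,\mathbb{E}[XY]$
What is NPG trying to approximate?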
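Take $X = \nabla\log\pi_\theta(a\mid s)$ and $Y = A^{\pi_\theta}(s,a)$, with $s\sim d^{\pi_\theta}_{s_0}$ and $a\sim\pi_\theta(\cdot\mid s)$. The NPG direction is then the least-squares regression coefficient of the advantage onto the score features. This sketch checks that numerically for a linear policy class. It assumes a hypothetical random MDP and random features `phi`, chosen so that $F$ is invertible.

```python
import numpy as np

rng = np.random.default_rng(1)
S, A, k, gamma = 5, 3, 4, 0.9            # k = feature dimension (hypothetical)
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))
phi = rng.normal(size=(S, A, k))         # hypothetical features phi(s, a)
theta = rng.normal(size=k)

logits = phi @ theta
pi = np.exp(logits - logits.max(axis=1, keepdims=True)); pi /= pi.sum(axis=1, keepdims=True)

# Exact V and advantages, plus the discounted state visitation d from state 0.
M = np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P)
V = np.linalg.solve(M, np.sum(pi * r, axis=1))
Adv = r + gamma * P @ V - V[:, None]
d = (1 - gamma) * np.linalg.solve(M.T, np.eye(S)[0])

# Score of the linear softmax class: grad log pi(a|s) = phi(s, a) - E_{a'~pi}[phi(s, a')].
X = phi - np.einsum("sa,sak->sk", pi, phi)[:, None, :]   # (S, A, k)
w = d[:, None] * pi                                       # weights d(s) pi(a|s)

# E[X X^T]^{-1} E[X Y] with X = score and Y = advantage.
F = np.einsum("sa,sai,saj->ij", w, X, X)
b = np.einsum("sa,sai,sa->i", w, X, Adv)
npg_dir = np.linalg.solve(F, b)

# The same vector, obtained as a weighted least-squares fit of Adv onto the score features.
sw = np.sqrt(w).ravel()
ls_dir, *_ = np.linalg.lstsq(X.reshape(-1, k) * sw[:, None], Adv.ravel() * sw, rcond=None)
print(np.allclose(npg_dir, ls_dir))   # True
```

The NPG step is then $\theta \leftarrow \theta + \frac{\eta}{1-\gamma}$ `npg_dir`.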

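Equivalent Update Rule (for the softmax)
Take the best linear fit of $Q^{\pi_\theta}$ in “policy space” features: this gives $W^\star_{s,a} = A^{\pi_\theta}(s,a)$.
Using the NPG update rule: $\theta_{s,a} \leftarrow \theta_{s,a} + \frac{\eta}{1-\gamma}A^{\pi_\theta}(s,a)$.
And so an equivalent update rule to NPG is:
$$\pi_\theta(a\mid s) \leftarrow \pi_\theta(a\mid s)\,\exp\Big(\frac{\eta}{1-\gamma}A^{\pi_\theta}(s,a)\Big)\Big/Z.$$
What algorithm does this remind you of?
Questions: convergence? General case/approximation?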

But does gradient descent even work in RL?
[Figure: optimization landscapes, Reinforcement Learning vs. Supervised Learning]
What about approximation? Stay tuned!!

Part-2: Convergence and Approximation

The Optimization Landscape
Supervised learning: gradient descent tends to ‘just work’ in practice and is not sensitive to initialization. Saddle points are not a problem.
Reinforcement learning: local search depends on initialization in many real problems, due to “very” flat regions. Gradients can be exponentially small in the “horizon”.

Prior work: The Explore/Exploit Tradeoff
RL and the vanishing gradient problem!
Reinforcement learning: a random initialization has “very” flat regions in real problems (lack of ‘exploration’). Thrun ’92: random search does not find the reward quickly.
Lemma [Agarwal, Lee, K., Mahajan]: with random init, all $k$-th order gradients are exponentially small in magnitude, for all orders $k$ up to $H/\ln H$, where $H = 1/(1-\gamma)$. This is a landscape/optimization issue (also a statistical issue if we used random init).
(Theory) Balancing the explore/exploit tradeoff: [Kearns & Singh ’02] $E^3$ is a near-optimal algorithm. Sample complexity: [K. ’03, Azar ’17]. Model free: [Strehl et al. ’06; Dann and Brunskill ’15; Szita & Szepesvari ’10; Lattimore et al. ’14; Jin et al. ’18].

Part 2: Understanding the convergence properties of the (NPG) policy gradient methods!
§ A: Convergence. Let’s look at the tabular/“softmax” case.
§ B: Approximation: “linear” policies and neural nets.

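NPG: back to the “soft” policy iteration interpretation
Remember the softmax policy class $\pi_\theta(a\mid s) \propto \exp(\theta_{s,a})$, which has $SA$ parameters.
At iteration $t$, the NPG update rule
$$\theta^{(t+1)} = \theta^{(t)} + \eta\,F(\theta^{(t)})^{-1}\,\nabla V^{(t)}(s_0)$$
is equivalent to a “soft” (exact) policy iteration update rule:
$$\pi^{(t+1)}(a\mid s) = \pi^{(t)}(a\mid s)\,\exp\Big(\frac{\eta}{1-\gamma}A^{(t)}(s,a)\Big)\Big/Z_t(s),$$
where $V^{(t)}$ and $A^{(t)}$ are shorthand for $V^{\pi^{(t)}}$ and $A^{\pi^{(t)}}$.
What happens for this non-convex update rule?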

Part-2A: Global Convergence

Provable Global Convergence of NPG
Theorem [Agarwal, Lee, K., Mahajan 2019]: for the softmax policy class, with $\eta = (1-\gamma)^2\log A$, after $T$ iterations we have
$$V^\star(s_0) - V^{(T)}(s_0) \le \frac{2}{(1-\gamma)^2\,T}.$$
Dimension-free iteration complexity! (No dependence on $S$, $A$.) Also a “FAST RATE”!
Even though the problem is non-convex, a mirror descent analysis applies. Analysis idea from [Even-Dar, K., Mansour 2009].
What about approximate/sampled gradients and large state spaces?
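The theorem can be illustrated on a small example. The sketch below assumes:
- a hypothetical random MDP with rewards in $[0,1)$;
- exact advantages;
- the uniform initial policy ($\theta^{(0)} = 0$);
- the step size $\eta = (1-\gamma)^2\log A$.

It computes $V^\star$ by value iteration and compares the gap after $T$ steps with $2/((1-\gamma)^2T)$.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma = 5, 3, 0.8                         # hypothetical toy MDP, rewards in [0, 1)
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))

def evaluate(pi):
    """Return V^pi and A^pi."""
    V = np.linalg.solve(np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P), np.sum(pi * r, axis=1))
    return V, r + gamma * P @ V - V[:, None]

def softmax(theta):
    e = np.exp(theta - theta.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

# V* by value iteration (gamma**2000 is negligible).
V_star = np.zeros(S)
for _ in range(2000):
    V_star = (r + gamma * P @ V_star).max(axis=1)

eta = (1 - gamma) ** 2 * np.log(A)              # step size from the theorem
theta = np.zeros((S, A))                        # theta^(0) = 0, i.e. the uniform policy
T_max = 200
values = []
for t in range(T_max + 1):
    V, Adv = evaluate(softmax(theta))
    values.append(V)                            # values[t] = V^(t)
    theta = theta + eta * Adv / (1 - gamma)     # NPG step for the softmax class

for T in (10, 50, 200):
    print(T, np.max(V_star - values[T]), 2 / ((1 - gamma) ** 2 * T))   # gap vs. bound
```

Tracking the logits $\theta$ rather than $\pi$ implements the same multiplicative update without ever taking the log of a tiny probability.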

Notes: Potentials and Progress?

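But first, the “Performance Difference Lemma”
Lemma [K. ’02]: a characterization of the performance gap between any two policies:
$$V^{\pi}(s_0) - V^{\pi'}(s_0) = \mathbb{E}_{s_t,a_t\sim\pi}\Big[\sum_{t=0}^{\infty}\gamma^t A^{\pi'}(s_t,a_t)\,\Big|\,s_0\Big] = \frac{1}{1-\gamma}\,\mathbb{E}_{s\sim d^{\pi}_{s_0}}\,\mathbb{E}_{a\sim\pi(\cdot\mid s)}\big[A^{\pi'}(s,a)\big].$$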
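Both sides of the lemma can be computed exactly for a small tabular MDP. This is a minimal NumPy check that assumes two arbitrary random policies on a hypothetical random MDP.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma, s0 = 5, 3, 0.9, 0                  # hypothetical toy MDP
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))

def random_policy():
    pi = rng.random((S, A))
    return pi / pi.sum(axis=1, keepdims=True)

def evaluate(pi):
    """Return V^pi, A^pi and the linear-system matrix I - gamma * P_pi."""
    M = np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P)
    V = np.linalg.solve(M, np.sum(pi * r, axis=1))
    return V, r + gamma * P @ V - V[:, None], M

pi, pi_prime = random_policy(), random_policy()
V_pi, _, M_pi = evaluate(pi)
V_prime, A_prime, _ = evaluate(pi_prime)
d_pi = (1 - gamma) * np.linalg.solve(M_pi.T, np.eye(S)[s0])   # d^pi_{s0}

lhs = V_pi[s0] - V_prime[s0]
rhs = np.sum(d_pi[:, None] * pi * A_prime) / (1 - gamma)     # E_{s~d^pi, a~pi}[A^{pi'}(s, a)] / (1 - gamma)
print(np.isclose(lhs, rhs))   # True
```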

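Mirror Descent Gives a Proof! (even though it is non-convex)
With $d^\star = d^{\pi^\star}_{s_0}$ and $\pi_s = \pi(\cdot\mid s)$:
$$\begin{aligned}
\mathbb{E}_{s\sim d^\star}\big[\mathrm{KL}(\pi^\star_s\,\|\,\pi^{(t)}_s) - \mathrm{KL}(\pi^\star_s\,\|\,\pi^{(t+1)}_s)\big]
&= \mathbb{E}_{s\sim d^\star}\sum_a \pi^\star(a\mid s)\log\frac{\pi^{(t+1)}(a\mid s)}{\pi^{(t)}(a\mid s)} \\
&= \frac{\eta}{1-\gamma}\,\mathbb{E}_{s\sim d^\star}\sum_a \pi^\star(a\mid s)\,A^{(t)}(s,a) - \mathbb{E}_{s\sim d^\star}\sum_a \pi^\star(a\mid s)\log Z_t(s) \\
&= \eta\big(V^\star(s_0) - V^{(t)}(s_0)\big) - \mathbb{E}_{s\sim d^\star}\log Z_t(s),
\end{aligned}$$
where the last step uses the performance difference lemma.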

Notes: are we making progress?

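Re-arranging:
$$V^\star(s_0) - V^{(t)}(s_0) = \frac{1}{\eta}\,\mathbb{E}_{s\sim d^\star}\big[\mathrm{KL}(\pi^\star_s\,\|\,\pi^{(t)}_s) - \mathrm{KL}(\pi^\star_s\,\|\,\pi^{(t+1)}_s) + \log Z_t(s)\big].$$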

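Understanding progress:
$$\begin{aligned}
V^\star(s_0) - V^{(T-1)}(s_0) &\le \frac{1}{T}\sum_{t=0}^{T-1}\big(V^\star(s_0) - V^{(t)}(s_0)\big) \\
&= \frac{1}{\eta T}\,\mathbb{E}_{s\sim d^\star}\big[\mathrm{KL}(\pi^\star_s\,\|\,\pi^{(0)}_s) - \mathrm{KL}(\pi^\star_s\,\|\,\pi^{(T)}_s)\big] + \frac{1}{\eta T}\sum_{t=0}^{T-1}\mathbb{E}_{s\sim d^\star}\log Z_t(s) \\
&\le \frac{\log A}{\eta T} + \frac{1}{\eta T}\sum_{t=0}^{T-1}\mathbb{E}_{s\sim d^\star}\log Z_t(s).
\end{aligned}$$
The first step uses that $V^{(t)}(s_0)$ is non-decreasing in $t$, and the second telescopes the KL terms. The last uses that KL is non-negative and that $\pi^{(0)}$ is uniform, so $\mathrm{KL}(\pi^\star_s\,\|\,\pi^{(0)}_s) \le \log A$.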

A slow rate proof sketch

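The key lemma for the fast rate: for any starting state distribution $\mu$,
$$\mathbb{E}_{s\sim\mu}\log Z_t(s) \le \frac{\eta}{1-\gamma}\,\mathbb{E}_{s\sim\mu}\big[V^{(t+1)}(s) - V^{(t)}(s)\big].$$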
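The key lemma can be monitored along the exact NPG iterates. This sketch uses a hypothetical random MDP, an arbitrary $\eta > 0$, and an arbitrary starting distribution $\mu$. It computes $\log Z_t(s)$ stably and checks, at every iteration, both $\log Z_t(s) \ge 0$ and the inequality.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma, eta = 5, 3, 0.9, 0.5               # hypothetical MDP; any eta > 0
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))
mu = rng.random(S); mu /= mu.sum()              # an arbitrary starting distribution

def evaluate(pi):
    """Return V^pi and A^pi."""
    V = np.linalg.solve(np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P), np.sum(pi * r, axis=1))
    return V, r + gamma * P @ V - V[:, None]

margins, min_log_Z = [], []
pi = np.full((S, A), 1.0 / A)                   # pi^(0): uniform
for t in range(20):
    V, Adv = evaluate(pi)
    logits = eta * Adv / (1 - gamma)
    m = logits.max(axis=1)
    # log Z_t(s) = log sum_a pi(a|s) exp(eta * A(s, a) / (1 - gamma)), computed stably.
    log_Z = m + np.log(np.sum(pi * np.exp(logits - m[:, None]), axis=1))
    pi = pi * np.exp(logits - log_Z[:, None])   # NPG / multiplicative-weights update
    pi /= pi.sum(axis=1, keepdims=True)
    V_next, _ = evaluate(pi)
    margins.append(eta / (1 - gamma) * (mu @ (V_next - V)) - mu @ log_Z)
    min_log_Z.append(log_Z.min())

print(min(margins) >= -1e-10, min(min_log_Z) >= -1e-12)   # True True
```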

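The fast rate proof!
$$\begin{aligned}
V^\star(s_0) - V^{(T-1)}(s_0) &\le \frac{\log A}{\eta T} + \frac{1}{\eta T}\sum_{t=0}^{T-1}\mathbb{E}_{s\sim d^\star}\log Z_t(s) \\
&\le \frac{\log A}{\eta T} + \frac{1}{(1-\gamma)T}\sum_{t=0}^{T-1}\big(V^{(t+1)}(d^\star) - V^{(t)}(d^\star)\big) \\
&= \frac{\log A}{\eta T} + \frac{V^{(T)}(d^\star) - V^{(0)}(d^\star)}{(1-\gamma)T} \\
&\le \frac{\log A}{\eta T} + \frac{1}{(1-\gamma)^2 T}.
\end{aligned}$$
The second step is the key lemma with $\mu = d^\star$, and the last uses $0 \le V \le 1/(1-\gamma)$. With $\eta = (1-\gamma)^2\log A$, the bound is $\frac{2}{(1-\gamma)^2 T}$.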

Part-2B: Approximation (and statistics)

Remember our policy classes:
$\pi_\theta(a\mid s)$ is the probability of action $a$ given $s$, parameterized by $\pi_\theta(a\mid s) \propto \exp(f_\theta(s,a))$.
Softmax policy class: $f_\theta(s,a) = \theta_{s,a}$.
Linear policy class: $f_\theta(s,a) = \theta\cdot\phi(s,a)$, where $\phi(s,a)\in\mathbb{R}^d$.
Neural policy class: $f_\theta(s,a)$ is a neural network.

OpenAI: dexterous hand manipulation not far off?
Trained with “domain randomization”. Basically: the measure $s_0 \sim \mu$ was diverse.

Prior work: The Explore/Exploit Tradeoff
Policy search algorithms: exploration and start-state measures:
$$\max_\theta\, \mathbb{E}_{s_0\sim\mu}\big[V^{\pi_\theta}(s_0)\big].$$
Thrun ’92: random search does not find the reward quickly.
Idea: reweighting by a diverse distribution $\mu$ to handle the “vanishing gradient” problem. There is a sense in which this reweighting is related to a “condition number”.
(Theory) Balancing the explore/exploit tradeoff: [Kearns & Singh ’02] $E^3$ is a near-optimal algorithm. Sample complexity: [K. ’03, Azar ’17]. Model free: [Strehl et al. ’06; Dann and Brunskill ’15; Szita & Szepesvari ’10; Lattimore et al. ’14; Jin et al. ’18].
Related theory: [K. & Langford ’02], [K. ’03]: conservative policy iteration (CPI) has the strongest provable guarantees, in terms of $\mu$ along with the error of a ‘supervised learning’ black box.
Other ‘reductions to SL’: [Bagnell et al. ’04], [Scherer & Geist ’14], [Geist et al. ’19], etc. Helpful for imitation learning: [Ross et al. 2011]; [Ross & Bagnell 2014]; [Sun et al. 2017].

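NPG for the linear policy class
Now: $\pi_\theta(a\mid s) \propto \exp(\theta\cdot\phi_{s,a})$.
Take the best linear fit in “policy space” features:
$$W^\star = \arg\min_W\, \mathbb{E}_{s_0\sim\mu}\,\mathbb{E}_{(s,a)\sim d^{\pi_\theta}_{s_0}}\big[(W\cdot\phi_{s,a} - A^{\pi_\theta}(s,a))^2\big],$$
where $\mu$ is our start-state distribution, hopefully with “coverage”.
Define $\widehat{A}^{\pi_\theta}(s,a) = W^\star\cdot\phi_{s,a}$. The NPG update is then equivalent to:
$$\pi_\theta(a\mid s) \leftarrow \pi_\theta(a\mid s)\,\exp\Big(\frac{\eta}{1-\gamma}\widehat{A}^{\pi_\theta}(s,a)\Big)\Big/Z.$$
This is like a soft “approximate” policy iteration step.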
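A population-level version of this step can be written as weighted least squares, weighting each $(s,a)$ by $d^{\pi}_\mu(s)\pi(a\mid s)$. This sketch uses NumPy on a hypothetical random MDP, with exact advantages in place of samples. Because $\pi_\theta \propto \exp(\theta\cdot\phi)$, the multiplicative update on $\pi$ is implemented as $\theta \leftarrow \theta + \eta W^\star/(1-\gamma)$, which yields the same policy. With one-hot features it should reproduce the tabular softmax NPG update.

```python
import numpy as np

rng = np.random.default_rng(0)
S, A, gamma, eta = 4, 3, 0.9, 0.5                 # hypothetical MDP and step size
P = rng.random((S, A, S)); P /= P.sum(axis=2, keepdims=True)
r = rng.random((S, A))
mu = np.full(S, 1.0 / S)                          # start-state distribution with full coverage

def softmax(x):
    e = np.exp(x - x.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def linear_npg_step(theta, phi):
    """One exact (population) NPG step for pi(a|s) proportional to exp(theta . phi[s, a])."""
    pi = softmax(phi @ theta)
    M = np.eye(S) - gamma * np.einsum("sa,sat->st", pi, P)
    V = np.linalg.solve(M, np.sum(pi * r, axis=1))
    Adv = r + gamma * P @ V - V[:, None]
    d = (1 - gamma) * np.linalg.solve(M.T, mu)   # state visitation d^pi_mu
    sw = np.sqrt(d[:, None] * pi).ravel()         # sqrt of the (s, a) regression weights
    k = phi.shape[-1]
    # W* = argmin_W E_{(s,a) ~ d^pi_mu}[(W . phi(s, a) - A^pi(s, a))^2], by weighted least squares.
    W, *_ = np.linalg.lstsq(phi.reshape(-1, k) * sw[:, None], Adv.ravel() * sw, rcond=None)
    # pi <- pi * exp(eta * W . phi / (1 - gamma)) / Z, written as an update of theta.
    return theta + eta * W / (1 - gamma), pi, Adv

one_hot = np.eye(S * A).reshape(S, A, S * A)      # tabular features
theta0 = rng.normal(size=S * A)
theta1, pi0, Adv0 = linear_npg_step(theta0, one_hot)
pi_mw = pi0 * np.exp(eta * Adv0 / (1 - gamma)); pi_mw /= pi_mw.sum(axis=1, keepdims=True)
print(np.allclose(softmax(one_hot @ theta1), pi_mw))   # True
```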

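Sample Based NPG, linear case
Sample trajectories: at iteration $t$, use start state $s_0 \sim \mu$, then follow $\pi^{(t)}$.
Now do regression on this sampled data:
$$\widehat{W} = \arg\min_W\, \widehat{\mathbb{E}}_{s,a}\big[(W\cdot\phi_{s,a} - A^{(t)}(s,a))^2\big].$$
Define $\widehat{A}(s,a) = \widehat{W}\cdot\phi_{s,a}$. And so an equivalent update rule to NPG is:
$$\pi^{(t+1)}(a\mid s) = \pi^{(t)}(a\mid s)\,\exp\Big(\frac{\eta}{1-\gamma}\widehat{A}(s,a)\Big)\Big/Z.$$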

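Guarantees: NPG for linear policy classes (realizability)
Suppose that $A^{\pi}(s,a)$ is a linear function in $\phi_{s,a}$.
Supervised learning error: suppose we have bounded regression error, say due to sampling:
$$\mathbb{E}\big[(A^{(t)}(s,a) - \widehat{W}\cdot\phi(s,a))^2\big] \le \varepsilon.$$
Relative condition number (to the optimal state-action measure $d^\star$ starting from $s_0$):
$$\kappa = \max_x \frac{\mathbb{E}_{(s,a)\sim d^\star}\big[(\phi_{s,a}\cdot x)^2\big]}{\mathbb{E}_{(s,a)\sim\mu}\big[(\phi_{s,a}\cdot x)^2\big]}.$$
Theorem [Agarwal, Lee, K., Mahajan 2019]: let $A$ be the number of actions and $H$ the horizon. After $T$ iterations, for all $s_0$, the NPG algorithm satisfies $V^\star(s_0) - V^{(T)}(s_0) \le H\sqrt{2\log A/T}$, plus an error term that grows with $\sqrt{\kappa\,\varepsilon}$ (with a polynomial dependence on $H$).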
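In finite dimensions, $\kappa$ is a generalized eigenvalue problem. Let $\Sigma_\nu = \mathbb{E}_{(s,a)\sim\nu}[\phi\phi^\top]$ and $\Sigma_\mu = LL^\top$. Then $\kappa$ is the largest eigenvalue of $L^{-1}\Sigma_{d^\star}L^{-\top}$. This minimal NumPy sketch assumes $\Sigma_\mu$ is positive definite and uses random stand-ins for the features and for both measures.

```python
import numpy as np

def relative_condition_number(phi, p, q):
    """kappa = max_x E_p[(phi . x)^2] / E_q[(phi . x)^2] for weights p, q over the rows of phi."""
    sigma_p = phi.T @ (p[:, None] * phi)            # E_p[phi phi^T]
    sigma_q = phi.T @ (q[:, None] * phi)            # E_q[phi phi^T], assumed positive definite
    L = np.linalg.cholesky(sigma_q)                 # sigma_q = L L^T
    L_inv = np.linalg.inv(L)
    # Substituting x = L^{-T} y turns the ratio into a Rayleigh quotient of L^{-1} sigma_p L^{-T}.
    return np.linalg.eigvalsh(L_inv @ sigma_p @ L_inv.T).max()

rng = np.random.default_rng(0)
n, k = 12, 4                                        # n = S * A state-action pairs, k features (hypothetical)
phi = rng.normal(size=(n, k))
d_star = rng.random(n); d_star /= d_star.sum()      # stand-in for the optimal measure d*
mu = rng.random(n); mu /= mu.sum()                  # stand-in for the coverage measure
kappa = relative_condition_number(phi, d_star, mu)

# Sanity check: no sampled direction x has a larger ratio than kappa.
xs = rng.normal(size=(1000, k))
proj = (phi @ xs.T) ** 2                            # (n, 1000) values of (phi . x)^2
ratios = (proj * d_star[:, None]).sum(axis=0) / (proj * mu[:, None]).sum(axis=0)
print(kappa, ratios.max() <= kappa * (1 + 1e-9))
```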

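Sample Based NPG, neural case
Now: $\pi_\theta(a\mid s) \propto \exp(f_\theta(s,a))$.
Sampling: at iteration $t$, sample $s_0 \sim \mu$ and follow $\pi_\theta$.
Supervised learning/regression:
$$\widehat{W} = \arg\min_W\, \widehat{\mathbb{E}}_{s,a}\big[(W\cdot\nabla_\theta f_\theta(s,a) - A^{\pi_\theta}(s,a))^2\big].$$
Define $\widehat{A}(s,a) = \widehat{W}\cdot\nabla_\theta f_\theta(s,a)$. The NPG update is:
$$\pi^{(t+1)}(a\mid s) = \pi^{(t)}(a\mid s)\,\exp\Big(\frac{\eta}{1-\gamma}\widehat{A}(s,a)\Big)\Big/Z.$$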

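Guarantees: NPG for linear policy classes (realizability)
Suppose that $A^{\pi}(s,a)$ is a linear function in $\nabla_\theta f_\theta(s,a)$.
Supervised learning error: suppose we have bounded regression error, say due to sampling:
$$\mathbb{E}_{s,a}\big[(A^{(t)}(s,a) - \widehat{W}\cdot\nabla_\theta f_\theta(s,a))^2\big] \le \varepsilon.$$
Relative condition number (to the optimal state-action measure $d^\star$ starting from $s_0$):
$$\kappa = \max_x \frac{\mathbb{E}_{(s,a)\sim d^\star}\big[(\nabla_\theta f_\theta(s,a)\cdot x)^2\big]}{\mathbb{E}_{(s,a)\sim\mu}\big[(\nabla_\theta f_\theta(s,a)\cdot x)^2\big]}.$$
Theorem [Agarwal, Lee, K., Mahajan 2019]: let $A$ be the number of actions and $H$ the horizon. After $T$ iterations, for all $s_0$, the NPG algorithm satisfies $V^\star(s_0) - V^{(T)}(s_0) \le H\sqrt{2\log A/T}$, plus an error term that grows with $\sqrt{\kappa\,\varepsilon}$ (with a polynomial dependence on $H$).
NTK TRPO analysis [Liu et al. ’19].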

Thank you!
Today: mathematical foundations of policy gradient methods.
With “coverage”, policy gradients have the strongest theoretical guarantees and are practically effective!
New directions/not discussed: design of good exploratory distributions $\mu$; relations to transfer learning and “distribution shift”.
RL is a very relevant area, both now and in the future! With some basics, please participate.

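Some details for the fast rate!
$$\begin{aligned}
V^{(t+1)}(\mu) - V^{(t)}(\mu) &= \frac{1}{1-\gamma}\,\mathbb{E}_{s\sim d^{(t+1)}_\mu}\sum_a \pi^{(t+1)}(a\mid s)\,A^{(t)}(s,a) \\
&= \frac{1}{\eta}\,\mathbb{E}_{s\sim d^{(t+1)}_\mu}\sum_a \pi^{(t+1)}(a\mid s)\log\frac{\pi^{(t+1)}(a\mid s)\,Z_t(s)}{\pi^{(t)}(a\mid s)} \\
&= \frac{1}{\eta}\,\mathbb{E}_{s\sim d^{(t+1)}_\mu}\mathrm{KL}(\pi^{(t+1)}_s\,\|\,\pi^{(t)}_s) + \frac{1}{\eta}\,\mathbb{E}_{s\sim d^{(t+1)}_\mu}\log Z_t(s) \\
&\ge \frac{1}{\eta}\,\mathbb{E}_{s\sim d^{(t+1)}_\mu}\log Z_t(s) \;\ge\; \frac{1-\gamma}{\eta}\,\mathbb{E}_{s\sim\mu}\log Z_t(s).
\end{aligned}$$
The first line is the performance difference lemma. The last step uses $d^{(t+1)}_\mu \ge (1-\gamma)\mu$ and $\log Z_t(s) \ge 0$, which holds by Jensen’s inequality since $\mathbb{E}_{a\sim\pi^{(t)}}[A^{(t)}(s,a)] = 0$.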

